About This Manual

This manual was written during a 5-day Book Sprint at the Haus der Kulturen der Welt, Berlin (August 10-15, 2009).

Present were:

Jan Gerber

Holmes Wilson

Susanne Lang

David Kühling

Jörn Seger

Plus many people helping online. Many thanks also to Leslie Hawthorn and Google for supporting this sprint, and to the Berlin Summercamp. This book is an ongoing effort...please feel free to improve it!

1. Register

Register at FLOSS Manuals:

http://en.flossmanuals.net/register

2. Contribute!

Select the manual http://en.flossmanuals.net/bin/view/TheoraCookbook/WebHome and a chapter to work on.

If you need to ask us questions about how to contribute then join the chat room listed below and ask us! We look forward to your contribution!

For more information on using FLOSS Manuals you may also wish to read our manual:

http://en.flossmanuals.net/FLOSSManuals

3. Chat

It's a good idea to talk with us so we can help co-ordinate all contributions. We have a chat room embedded in the FLOSS Manuals website so you can use it in the browser.

If you know how to use IRC you can connect to the following:

server: irc.freenode.net

channel: #flossmanuals

4. Mailing List

For discussing all things about FLOSS Manuals join our mailing list:

http://lists.flossmanuals.net/listinfo.cgi/discuss-flossmanuals.net

What is Digital Video?

Video is a series of images that appears as an image in motion. The first "videos" (films) were literally a series of photographs, illuminated one-by-one at a rate fast enough to trick the human eye. Digital video can be thought of as a series of images but usually the reality is more complex.

While it is possible to store videos as a series of still images (frames), this is a somewhat wasteful approach as a lot of information must be stored. Surely there must be a better way! Well, when we look at frames in a movie we quickly see that only parts of a moving image change from one frame to the next while the rest of the image stays exactly the same. If the image is of someone walking, for example, perhaps they move while the background stays the same. So why not simply describe what changes from one frame to the next, since a little description is much less information than a whole image itself?

In fact, that's what digital video does. And in a world with limited disk space and network connections, simple ideas like this one can let you store hundreds of videos on your computer instead of a handful, or download a video in minutes instead of hours.
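The idea of describing only what changes between frames can be sketched in a few lines of JavaScript. This is a toy illustration, not how Theora actually works: frames are assumed to be flat arrays of pixel values, and the function names diffFrames and applyChanges are made up for this example.

```javascript
// Toy illustration only: frames are flat arrays of pixel values.
// Real codecs such as Theora use far more elaborate techniques.

// Record only the pixels that differ between two frames.
function diffFrames(previous, next) {
  const changes = [];
  for (let i = 0; i < next.length; i++) {
    if (previous[i] !== next[i]) {
      changes.push({ index: i, value: next[i] });
    }
  }
  return changes;
}

// Rebuild the next frame from the previous frame plus the list of changes.
function applyChanges(previous, changes) {
  const frame = previous.slice();
  for (const change of changes) {
    frame[change.index] = change.value;
  }
  return frame;
}

const frame1 = [0, 0, 0, 0, 9, 9, 0, 0];
const frame2 = [0, 0, 0, 0, 0, 9, 9, 0]; // the "object" moved one pixel to the right
const frameChanges = diffFrames(frame1, frame2);
console.log(frameChanges.length); // 2 changed pixels instead of 8 stored pixels
```

The second frame can be rebuilt exactly with applyChanges(frame1, frameChanges), so nothing is lost by storing the short list of changes instead of the whole frame.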

In addition to this technique, digital video employs other methods to reduce the amount of data in a video. Digital video often uses tricks such as describing regions of similar color instead of describing each point one at a time (similar methods are used for describing still images). Or it carefully describes certain parts of the image where lots of detail or movement is happening, for instance the part you're probably staring at, while giving less attention to the boring stuff at the edges. These ideas are not new, but they are improving rapidly, with exciting consequences for producers of online video.

What is Theora?

Theora is a video technology for creating, editing, manipulating, and playing video. This type of technology is often referred to as a video format or codec (a portmanteau of coder-decoder). Theora is a free video format, meaning that anyone is free to use, study, improve, and distribute it without needing permission. Some parts of Theora are patented, but the owners of those patents have granted a permanent, irrevocable, royalty-free patent license to everyone.

Because distribution and improvement of Theora are not limited by patents, it can be included in free software. Distributions of GNU/Linux-based operating systems, such as Ubuntu, Debian GNU/Linux, or Fedora, all include Theora "out-of-the-box". Free software web browsers like Firefox and Chrome also support Theora. Across six major sets of browser usage-share statistics for July 2009, approximately 25% of Internet users were using Firefox and 2.5% were using Chrome. This means that every day a huge number of people are using software capable of playing Theora video.

History

Theora is based on an older technology called VP3, originally a proprietary and patented video format developed by a company called On2 Technologies. In September 2001, On2 donated VP3 to the Xiph.Org Foundation under a free software license. On2 also made an irrevocable, royalty-free license grant for any patent claims it might have over the software and any derivatives, allowing anyone to build on the VP3 technology and use it for any purpose. In 2002, On2 entered into an agreement with the Xiph.Org Foundation to make VP3 the basis of a new, free video format called Theora. On2 declared Theora to be the successor to VP3.

The Xiph.Org Foundation is a non-profit organization that focuses on the production and mainstreaming of free multimedia formats and software. In addition to the development of Theora, they developed the free audio codec Vorbis, as well as a number of very useful tools and components that make free multimedia software easier and more comfortable to use.

After several years of beta status, Theora released its first stable (1.0) version in November 2008. Videos encoded with any version of Theora since this point will continue to be compatible with any future player.

A broad community of developers, with support from companies like Red Hat and NGOs like the Wikimedia Foundation, continues to improve Theora.

The Web

Support for Theora video in browsers creates a special opportunity. Right now, nearly all online video requires Flash, a product owned by one company. But, now that around 25% of users can play Theora videos in their browser without having to install additional software, it is possible to challenge Flash's dominance as a web video distribution tool. Additionally, the new HTML5 standard by the W3C (World Wide Web Consortium) adds another exciting dimension — an integration of the web and video in new and exciting ways that complement Theora.

Patents and Copyright

The world of patents is complicated, leaving plenty of room for Theora's competitors to spread fear, uncertainty, and doubt ("FUD") about its usefulness as a truly free format. In essence, Theora is free. It is free for you to use, change, redistribute, implement, sell or anything else you may like to do with it. But it's important that supporters of free formats understand the questions that arise.

One commonly spread fear is that Theora infringes on submarine patents: patents nobody knows about yet that the makers of Theora never had the authority to use. However, all modern software could infringe on submarine patents, everything from Microsoft Word to the Linux kernel, and millions of people and entire industries still use these tools. In other words, submarine patents are a problem for the software industry as a whole, but this doesn't mean that the software industry should stop developing software.

It is also important to note that in a worst-case scenario, even if a submarine patent "emerged", Theora could probably work around it, as this sort of thing happens all the time. Large organizations like the Mozilla Foundation and Wikipedia have examined this issue and have come to the same conclusion.

The software produced by Xiph.org is also subject to copyright, and made available under free software licenses. Xiph.org provides code so that anyone can include it in any application. Xiph.org also provides a set of tools for working with Theora files. This means you can study, modify, redistribute and sell anything you make using Theora or any of the tools provided with it.

Other Free Video Formats

It is worth noting that there is another project creating a royalty-free, advanced video compression format. It is called Dirac. Originally created by the BBC Research department, Dirac will in the future try to cover all applications from Internet streaming to Ultra-high definition TV and expand to integrate with new hardware equipment technologies. Nevertheless, Ogg Theora lends itself extremely well to online (streaming) video distribution, whereas Dirac will likely become a better choice for sharing video files that are of high-definition footage.

Codecs

To work with digital video it often helps to know a little bit about the technology, so let's look at some of the basic concepts behind digital video.

A codec is a mathematical formula that reduces the file size of a video or audio file. Theora is known as a video codec since it works exclusively with video files.

When a codec reduces the file size of a video file, it is also said to be compressing the file. There are two forms of compression that are of interest here: lossless and lossy compression.

- Lossless compression - This is the process of compressing data into a smaller size without removing any of it. To visualise this process, imagine a paper bag with an object in it. When you remove the air in the bag by creating a vacuum, the object in the bag is not affected even though the total size of the bag is reduced.

- Lossy compression - Sometimes called 'Perceptual Encoding', this is the process of 'throwing away' data to reduce the file size. The compression algorithms used are complex and try to preserve the qualitative perceptual experience as much as possible while discarding as much data as necessary. Lossy compression is a very fine art. The algorithms that enable this take into account how the brain perceives sounds and images and then discard information from the audio or video file while maintaining an aural and visual experience resembling the original source material. To do this the process follows psychoacoustic and psychovisual modeling principles.
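The difference between the two forms of compression can be sketched with two very simple techniques. The example below is an illustration under stated assumptions, not what Theora does internally: run-length encoding stands in for lossless compression, rounding values to a coarser step stands in for lossy compression, and all function names are invented for this sketch.

```javascript
// Lossless: run-length encoding stores each run of equal values once.
function rleEncode(values) {
  const runs = [];
  for (const value of values) {
    const last = runs[runs.length - 1];
    if (last && last.value === value) {
      last.count += 1;
    } else {
      runs.push({ value: value, count: 1 });
    }
  }
  return runs;
}

// Expanding the runs again gives back exactly the original values.
function rleDecode(runs) {
  const values = [];
  for (const run of runs) {
    for (let i = 0; i < run.count; i++) {
      values.push(run.value);
    }
  }
  return values;
}

// Lossy: round every value to the nearest multiple of `step`.
// The small differences that are rounded away cannot be recovered.
function quantize(values, step) {
  return values.map((v) => Math.round(v / step) * step);
}

const samples = [10, 10, 10, 11, 12, 12, 50, 50];
const roundTrip = rleDecode(rleEncode(samples)); // identical to samples
const coarse = quantize(samples, 10);            // [10, 10, 10, 10, 10, 10, 50, 50]
console.log(rleEncode(samples).length, rleEncode(coarse).length); // 4 runs versus 2 runs
```

The lossy version compresses better (two runs instead of four) precisely because it has thrown away the small differences between 10, 11 and 12.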

Quality

The quality of digital video is determined by the amount of information encoded (bitrate) and the type of video compression (codec) used. While there are some codecs that can be considered to be more advanced than Theora, the difference in perceived quality is not significant.

Bitrate and quality

Since digital video represents a moving image as information, it makes sense that the more information you have, the higher the quality of the moving image. The bitrate is literally the number of bits per second of video (and/or audio) used in encoding. For a given codec, a higher bitrate allows for higher quality. For a given duration, a higher bitrate also means a bigger file. To give some examples, DV cameras record video and audio data at 25 Mbit/s (a Mbit is 1,000,000 bits) and DVDs are encoded at 6 to 9 Mbit/s. Internet video is limited by the speed of broadband connections: many people have 512 kbit/s (a kbit/s means 1,000 bits delivered per second) or 1 Mbit/s lines, with 16 Mbit/s connections becoming more common recently. Right now, around 700 kbit/s is commonly used for videos embedded on web pages.

There are many reasons to want a lower bitrate. The video may need to fit on a certain storage medium, like a DVD. Or you may want to deliver the video fast enough for your audience, whose average internet connection speed is limited, to be able to watch it as they receive it.
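The relationship between bitrate, duration and file size is simple arithmetic, sketched below. The function names are made up for this example, and the calculation assumes a constant bitrate and ignores container overhead, so treat the results as rough estimates.

```javascript
// Approximate file size in megabytes for a given bitrate and duration.
// Units follow the text: 1 kbit = 1,000 bits. Here 1 MB = 1,000,000 bytes.
function fileSizeMegabytes(bitrateKbitPerSecond, durationSeconds) {
  const totalBits = bitrateKbitPerSecond * 1000 * durationSeconds;
  return totalBits / 8 / 1000000;
}

// Can a connection deliver the video at least as fast as it plays?
function canStream(videoKbitPerSecond, connectionKbitPerSecond) {
  return connectionKbitPerSecond >= videoKbitPerSecond;
}

console.log(fileSizeMegabytes(700, 600)); // a 10-minute video at 700 kbit/s: 52.5
console.log(canStream(700, 512));         // false: a 512 kbit/s line is too slow
console.log(canStream(700, 1000));        // true: a 1 Mbit/s line is fast enough
```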

Different kinds of video may require different bitrates to achieve the same level of perceived quality. Video with lots of cuts and constantly moving camera angles requires more information to describe it than video with many still images. An action movie, for example, would require a higher bitrate than a slow moving documentary.

Most modern codecs allow for a variable bitrate. This means that the bitrate can change over time in response to the details required. In this case, a video codec would use more bits to encode 10 seconds of quick cuts and moving camera angles than it would use to encode 10 seconds of a relatively still image.

Codecs and quality

Codecs reduce the necessary bitrate of a media file by describing the media in clever, more efficient ways. Video codecs describe the changes between one frame and the next, instead of describing each frame separately. Audio codecs ignore certain frequencies that the human ear doesn't notice. Just as simple techniques can dramatically reduce the bitrate and size of the file, more sophisticated techniques can reduce it even more. This is how some codecs can be considered superior to others.

When codecs use complicated mathematical techniques to encode video, you need a powerful chip to decode that video quickly enough for playback. This is a reason why sometimes it's best to use a simpler codec. Video encoded using state-of-the-art tricks may be unwatchable on an old computer, for example. Or it sometimes might be best to use an older, simpler video codec (as DVDs do) because the hardware required to play it will be cheaper.

Theora and quality

Thanks to recent work by the Theora community, Theora achieves a similar level of quality to other modern codecs like H.264, the patent-encumbered codec used by Apple, YouTube, and others. This can be a matter of some controversy, and there are reasons to consider H.264 technically superior in quality to Theora. But the best way to decide is to see for yourself.

These sites have side-by-side comparisons between Theora and H.264:

http://people.xiph.org/~greg/video/ytcompare/comparison.html

http://people.xiph.org/~maikmerten/youtube/

Containers

A container or wrapper is a file format that specifies how different streams of data can be stored together, or sent over a network together. It allows audio and video data to be stored in one file and played back in a synchronised manner. It also allows seeking in the data, by telling the playback software where the audio and video data is for certain points in time.

In addition to audio and video, containers may provide metadata about the data they contain, including the size of the frames, the framerate, whether the audio is in mono or stereo, the sample rate, and also information about the codecs used to encode the data.

When you play a digital movie that has sound, your player is reading the container, and decoding the audio and video using separate codecs. Theora video is usually stored or streamed together with Vorbis sound in the Ogg container, but it can be stored in other containers too. Matroska (.mkv) is another format people use for Theora video.

The difference between containers and codecs

The three-letter extension at the end of the file name refers to the container, not the codec. People often get confused about this. When a file ends in .mp4 or .avi, those are containers that could contain several different combinations of audio and video streams. Certain containers don't work with certain codecs, and certain codecs work best with certain containers. But you can't tell for sure what codecs a video file requires by looking at the file extension.
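Because extensions are only a hint, software usually identifies a container by looking at the first bytes of the file. The sketch below assumes you already have those bytes as an array of numbers. It recognises the "OggS" marker that starts an Ogg stream and the four-byte EBML header that starts a Matroska file. The function name sniffContainer is made up for this example, and it still says nothing about which codecs are inside.

```javascript
// Guess the container from the first four bytes of a file.
// `bytes` is an array (or Uint8Array) holding the start of the file.
function sniffContainer(bytes) {
  const startsWith = (signature) =>
    signature.every((byte, i) => bytes[i] === byte);

  if (startsWith([0x4f, 0x67, 0x67, 0x53])) return "ogg";      // ASCII "OggS"
  if (startsWith([0x1a, 0x45, 0xdf, 0xa3])) return "matroska"; // EBML header
  return "unknown";
}

console.log(sniffContainer([0x4f, 0x67, 0x67, 0x53, 0x00])); // "ogg"
```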

Playing Theora

To play Ogg Theora videos you need a video player that supports Ogg Theora playback. Often, to play back some types of video you need to install obscure software, which can be very frustrating and time consuming. Fortunately, several video players can play Ogg Theora without the need to install anything else. The two easiest players to use are notable because they work the same across all of the major operating systems (GNU/Linux, Mac OS X, Windows).

VLC is a free software video player that plays many different types of video files, including Theora. You can get it online at http://www.videolan.org/vlc

Miro is another video player that supports Ogg Theora video (http://getmiro.com).

MPlayer is another free software player that supports Ogg Theora video (http://www.mplayerhq.hu/).

If you don't have any of them or don't want to install them, you can also use the Firefox web browser versions 3.5 and later (http://getfirefox.com) as a Theora viewer.

As a rule of thumb, if the video is on your desktop, use VLC, MPlayer or Miro. If the video is already on the web, use Firefox 3.5 or later.

Integrating Theora

If you want to use Theora with other software such as Windows Media Player or QuickTime Player, you need to install components or filters that add Theora support to those applications. This doesn't apply to you if you use GNU/Linux, since Theora is natively supported in most distributions and plays with Totem and other GStreamer based applications.

For Windows and Mac OS X you can add full Theora support to all QuickTime based applications, such as the QuickTime player itself, but also iTunes or iMovie. All you need to do is install the Xiph QuickTime Components (XiphQT), which can be downloaded from the Xiph.Org website: http://xiph.org/quicktime/download.htm

The other useful filter a Windows user might be interested in is the DirectShow filter. It is also offered by the Xiph.Org Foundation and adds encoding and decoding support for Ogg Vorbis, Speex, Theora and FLAC to any DirectShow application, such as Windows Media Player. You can download the filters from the Xiph.Org website: http://xiph.org/dshow/

VLC

VLC (which stands for VideoLAN Client) is an excellent tool for playing video and audio files. It is free software, it plays a wide range of formats including Theora, and it runs on a variety of platforms. If you use Windows, Mac OS X, or GNU/Linux (e.g. Ubuntu) then VLC is a great option for you. VLC also works the same across each operating system, so if you know how to use it in Windows (for example) you know how to use it under Ubuntu. VLC's flexibility and reliability make it one of the most popular free software video tools.

Installing

VLC is a desktop application that you need to download and install. Installation steps will vary depending on what platform you're using (GNU/Linux, Mac OS X, or Windows).

This page has links to download VLC, and instructions for various platforms:

http://www.videolan.org/vlc/

There is also a good manual about how to use and install VLC linked from the FLOSS Manuals website.

Playing Video

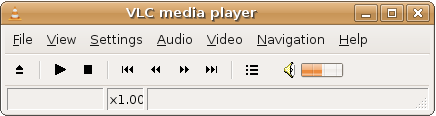

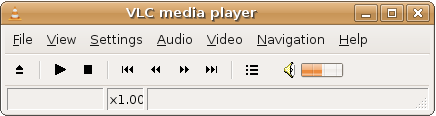

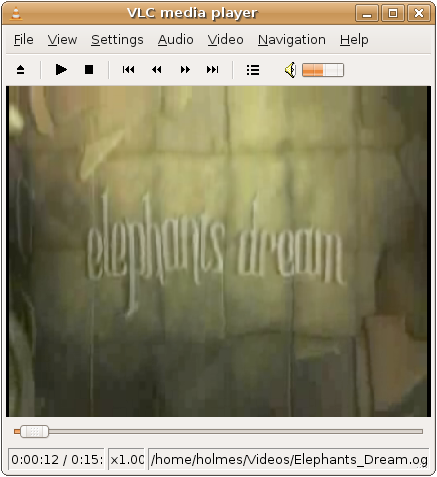

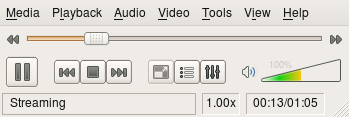

- Run VLC. You will see a window that looks more or less like this:

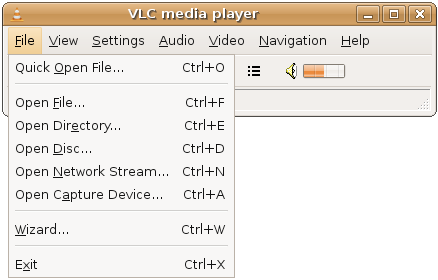

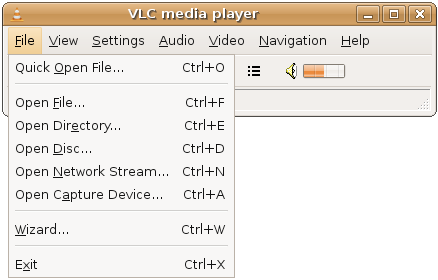

- Go to File > Quick Open File in the VLC menu.

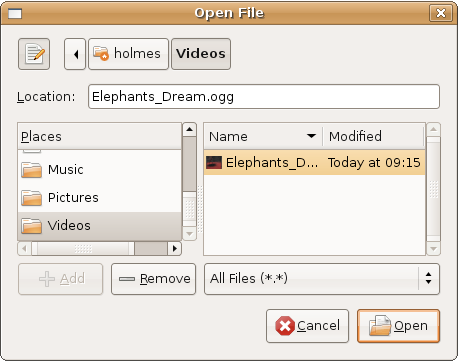

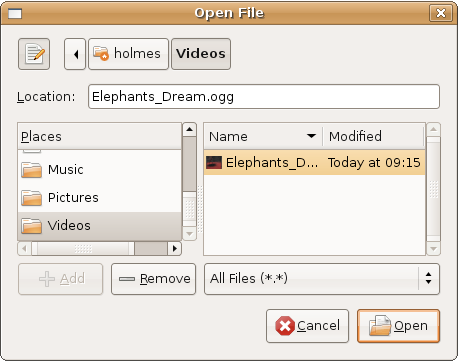

- Find the Theora file you want to open. Select it, and click "Open".

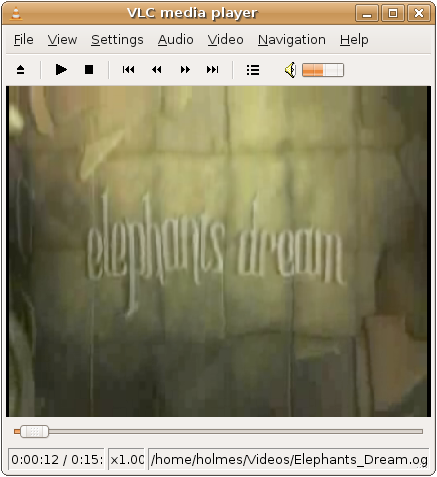

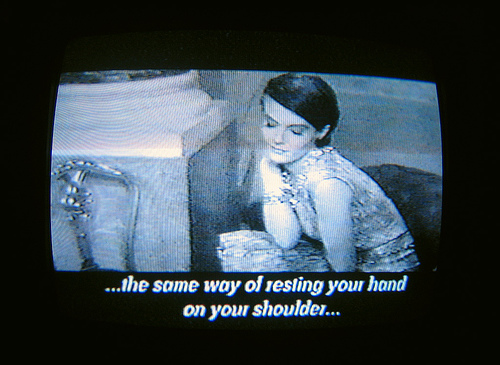

- A video will appear in your window like this, and the video should begin playing.

Basic Playback Controls

- As in most media players, you can drag the playback position indicator to move forward or backward in the video.

- For full-screen playback, double-click on the video. To exit full-screen, double-click again.

Miro

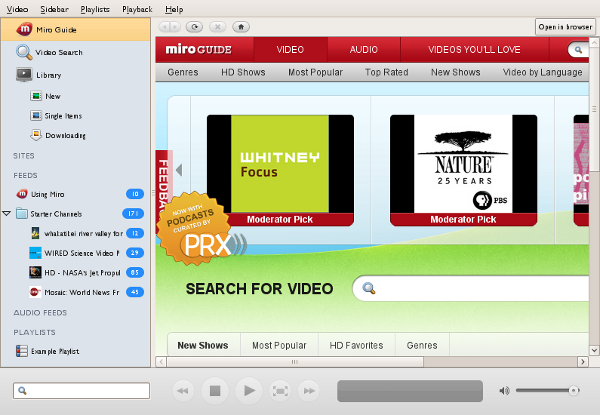

Miro (previously called Democracy TV) is a tool for finding, downloading and watching video from a wide range of online sources. It is free software made by the nonprofit (NGO) Participatory Culture Foundation: http://participatoryculture.org/.

Miro plays a wide range of formats including Theora, and it runs on a variety of platforms (operating systems such as Windows, Mac OS X, and GNU/Linux). In addition to video playback, Miro makes it easy to search for and download videos from specially formatted lists of videos known as podcasts or vodcasts.

You can use Miro to play video on your desktop, or to download and watch video from a URL pointing directly to the video file, or even from a popular video website like YouTube. You can also use Miro to download and then watch files shared over BitTorrent (using files ending in .torrent).

Installing

Miro is a desktop application that you need to download and install. Installation steps will vary depending on what platform you're using (GNU/Linux, Mac OS X, or Windows).

This page has links to download Miro and instructions for various platforms:

http://getmiro.com

Playing A Video

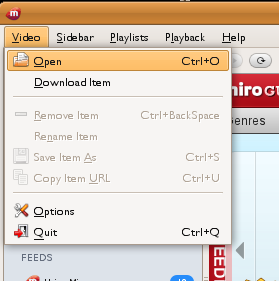

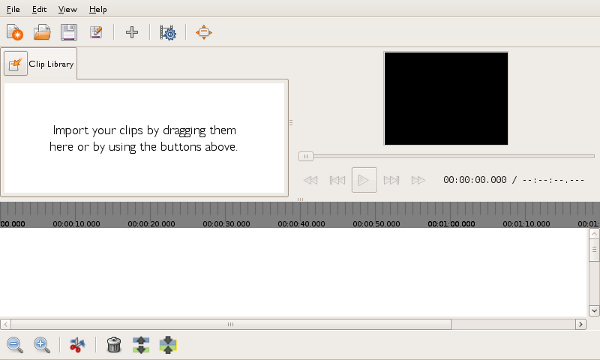

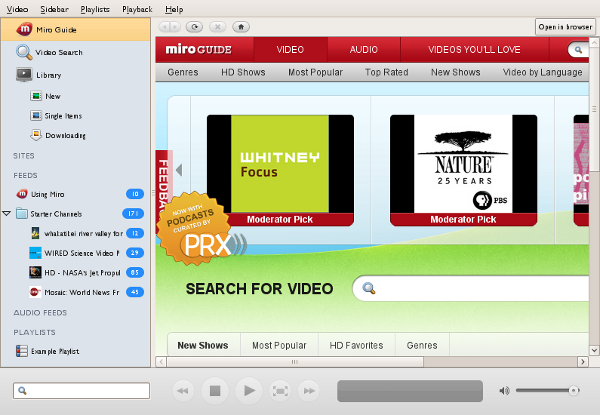

- Run Miro. After clicking through a few messages that display the first time Miro runs, you will see a window that looks like this:

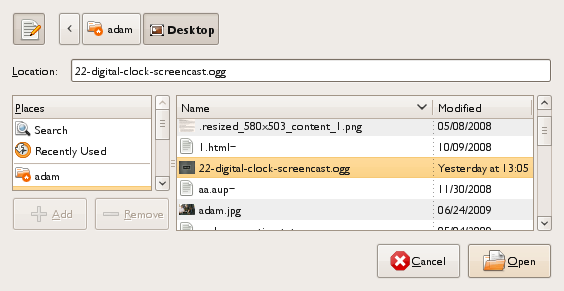

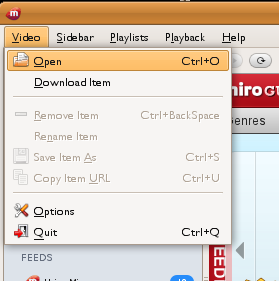

- Go to Video > Open in the Miro menu.

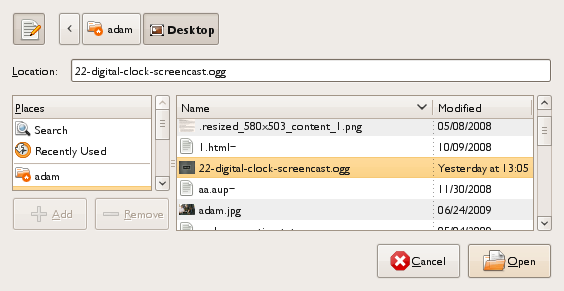

- Find the file you want to open. Select it, and click "Open".

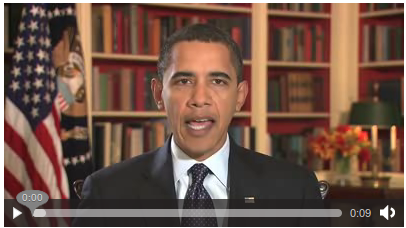

- The video should begin playing in the Miro Window.

Introduction

There are a few things to consider before you put your video online.

Keep in mind that in order for viewers to watch your video, they need to have a high-bandwidth connection. The higher the video quality the higher the bandwidth needed to access the video. This means that sometimes your target audience may not be able to access video online, and hence you may wish to consider another strategy such as distribution of the video on media like CD, DVD, or USB storage sticks.

If your audience has a fast internet connection you may wish to offer the video either for download or for playing from your website. Each strategy has its strengths depending on what it is you hope to achieve.

Playing on your website:

- you can define the context in which the video is being presented

- it is convenient for your audience to just click and play the video

- it is quite handy for your site visitors to just click and watch a small part of the video and not have to download a huge amount of data just to get an impression of your work

- it is easy for your audience to show your work to others, for instance by sending around a link to the video they want to point to

Offering your video for download:

- your audience can share it via file-sharing networks (some see this as a weakness)

- they can play it as many times as they wish without consuming extra bandwidth

- they can edit it

- they can show it offline (e.g. movie screenings)

- you can provide a higher quality video that is not possible to play in a website due to browser or bandwidth constraints

You could also choose to offer both strategies: an option to watch the video on your website and an option to download the video. You could even choose to upload two different versions of your video: one encoded in a quality more optimized for the web (something like a preview version of your film in a smaller size and poorer quality) and one video for people to download in a better quality and larger size and resolution.

If you want to share your video on your website you have a few options. One is using the HTML 5 video tag. Since some viewers might use old browsers that do not support it, you might also want to offer a fallback solution: the Cortado player (software you can embed in your webpage to play Theora).

If you don't have access to a server to store and deliver video, you can also use one of the many hosting sites that offer free hosting of Theora video. (You may not want to upload your video to hosting sites such as YouTube, since you would lose quality and would also waive some of your rights to the material.)

Another strategy is to offer your video for download with BitTorrent. BitTorrent is a peer-to-peer file-sharing protocol that pools the internet connections of all the computers downloading the same file. Hence, the more people that download your video with BitTorrent, the faster the transfer will become for other users. BitTorrent is probably the most economical way to transfer large, popular files on the internet.

HTML5 Video

If you create video, you may wish to display the content in a webpage. The code you use to create webpages is governed by a set of rules known as HTML, and there is a recent new version of these rules called HTML 5.

HTML 5 introduces a video tag. A 'tag' is a few lines of HTML code that instructs the browser to display something or do something. The HTML5 video tag allows simple integration of videos in a manner very similar to placing images in a webpage.

The video can also be displayed with very nice controls for play, pause, altering the audio volume, and scrolling through the timeline of the video.

Basic Syntax

Here is a basic example of a video embed tag using HTML 5:

<video src="video.ogv"></video>

The above example embeds the video file 'video.ogv' into a webpage. The file in this example should be located in the same directory as the HTML file, because the 'src' parameter contains only a file name with no path. To reference a video file in another directory on the same webserver, you need to provide the path information just as you would for an image file.

<video src="myvideofiles/video.ogv"></video>

You can also specify a file on another server:

<video src="http://mysite.com/video.ogv"></video>

Parameters

Adding additional parameters provides more control over the video.

<video

src="video.ogv"

width="480"

height="320"

autoplay

controls>

Your Browser does not support the video tag, upgrade to Firefox 3.5+

</video>

In this example the width and height of the video are provided. If you don't want the image to appear distorted it is important that you set the height and width dimensions correctly. autoplay means that the video will be played as soon as the page loads. controls specifies that the controls to pause or play the video (etc) are displayed.
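If you want to display a video at a different width, the matching height follows from the aspect ratio of the original. The small helper below is a sketch of that arithmetic. The name fitHeight and the 720x480 source size are assumptions made for this example.

```javascript
// Height that keeps the original aspect ratio at a new display width.
function fitHeight(originalWidth, originalHeight, targetWidth) {
  return Math.round(targetWidth * originalHeight / originalWidth);
}

// A 720x480 source shown 480 pixels wide needs a height of 320,
// which matches the width="480" height="320" used in the example above.
console.log(fitHeight(720, 480, 480)); // 320
```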

It is possible to include text or other HTML content inside the video tag as fallback content for browsers that do not support the video tag.

Using your own controls / player skin

If you know a little JavaScript you can control the playback quite easily. Instead of using the controls provided by the browser, it is possible to create your own interface and control the video element via JavaScript. There are two things you need to remember with this method:

- Do not forget to drop the controls attribute

- The video tag needs an id parameter like this:

<video src="video.ogv" id="myvideo"></video>

Some JavaScript functions:

var video = document.getElementById("myvideo");

//play video

video.play();

//pause video

video.pause();

//seek to second 10 in the video

video.currentTime = 10;

If you have multiple video tags in a single webpage you will need to give each a unique id so that the JavaScript knows which video the controls refer to.
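Building on the functions above, a custom play/pause button and a skip button could be wired up roughly as follows. This is a minimal sketch: togglePlayback and skip are names invented for this example, and they rely only on the play(), pause(), paused and currentTime members of the video element.

```javascript
// `video` is expected to be an HTML5 video element, for example
// document.getElementById("myvideo").

// Start playback if the video is paused, otherwise pause it.
function togglePlayback(video) {
  if (video.paused) {
    video.play();
  } else {
    video.pause();
  }
}

// Jump forward (positive seconds) or backward (negative seconds),
// never seeking before the start of the video.
function skip(video, seconds) {
  video.currentTime = Math.max(0, video.currentTime + seconds);
}

// In a web page you might connect these to your own buttons, e.g.:
//   playButton.onclick = function () { togglePlayback(video); };
//   backButton.onclick = function () { skip(video, -10); };
```

Passing the video element in as an argument means the same two functions can serve several videos on one page.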

A full list of functions and events provided by the video tag can be found in the HTML5 spec at http://www.whatwg.org/specs/web-apps/current-work/#video

Manual Fallback options

In the above, simple example, if the video element is not supported by the browser, it will simply fall back to displaying the text inside the video element.

Instead of falling back to the text, if the browser supports Java, it is possible to use Cortado as a fallback. Cortado is an open-source, cross-browser and cross-platform Theora video player written in Java. The great thing is that the user doesn't need to download any extra Java packages, as the applet uses the standard Java support in the browser. The Cortado jar file can be found here:

http://www.theora.org/cortado.jar

You can download the jar file, or you can refer to it directly. The following is an example of how to embed Cortado (not all parameters are required, but they are listed here to give you an idea of the possible options):

<applet code="com.fluendo.player.Cortado.class"

archive="http://www.theora.org/cortado.jar"

width="352" height="288">

<param name="url" value="http://myserver.com/theora.ogv"/>

<param name="framerate" value="29"/>

<param name="keepAspect" value="true"/>

<param name="video" value="true"/>

<param name="audio" value="true"/>

<param name="bufferSize" value="100"/>

<param name="userId" value="user"/>

<param name="password" value="test"/>

</applet>

If you choose to download the jar file as a fallback, make sure you put it (cortado.jar) and the above HTML page in the same directory. Then change the following line to include a reference (link) to your own Ogg stream (live or pre-recorded):

<param name="url" value="http://myserver.com/theora.ogv"/>

Now if you open the webpage in a browser it should play the video.

To use Cortado as a fallback, place the Cortado tag within the HTML5 video tag, as in the following example:

<video src="video.ogv" width="352" height="288">

<applet code="com.fluendo.player.Cortado.class"

archive="http://theora.org/cortado.jar" width="352" height="288">

<param name="url" value="video.ogv"/>

</applet>

</video>

JavaScript Based Players

Some JavaScript libraries exist to handle fallback selection. These libraries enable simple embedding while retaining fallback to many players and playback methods across many browsers.
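At their core, such libraries test what the browser can play and then choose a player. A very reduced sketch of that decision is shown below. It is not taken from any particular library: pickPlayer is a name invented for this example, and it relies on the canPlayType() method of the HTML5 video element, which returns an empty string when a type is not supported (the check for "no" is an assumption covering some early implementations).

```javascript
// Decide between native HTML5 playback and a fallback player.
// `video` is expected to be a video element, e.g. document.createElement("video").
function pickPlayer(video) {
  const theoraType = 'video/ogg; codecs="theora, vorbis"';
  if (!video || typeof video.canPlayType !== "function") {
    return "fallback"; // the browser does not know the video tag at all
  }
  const answer = video.canPlayType(theoraType);
  // "" means not supported; "maybe" and "probably" mean worth trying.
  // Assumption: some early implementations answered "no" instead of "".
  if (answer === "" || answer === "no") {
    return "fallback";
  }
  return "native";
}
```

A page could call pickPlayer(document.createElement("video")) and insert the Cortado applet only when the answer is "fallback".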

Mv_Embed

The mv_embed library is very simple to use. Including a single JavaScript file enables you to use the HTML5 video tag and have its attributes rewritten to a player that works across a wide range of browsers and plugins.

<script type="text/javascript" src="http://metavid.org/w/mwScriptLoader.php?class=mv_embed"></script>

...

<video src="mymovie.ogg" poster="mymovie.jpeg"></video>

ITheora

ITheora is a PHP script that lets you broadcast Ogg Theora and Vorbis video (and audio) files. It's simple to install and use. The ITheora site includes documentation on how to use the player and its skins.

Support in Browsers

Right now, the latest versions of the Mozilla Firefox, GNU IceCat and Epiphany browsers support Theora natively. Opera and Google Chrome have beta versions available with Theora support. Safari supports the video tag, but only supports codecs through QuickTime, which means that by default it does not support Theora. With XiphQT (http://xiph.org/quicktime) it is possible to add Theora support to QuickTime and thus Safari.

Hosting on your own site

Like images or HTML pages you can put your Theora videos on your own webserver.

MIME Types

For videos to work they have to be served with the right MIME type.

A MIME type is a way of identifying the different file types delivered over the Internet. This information is usually delivered with the data. It is usually not read by humans, but is interpreted by software so that the data is handed to, and processed by, the right kind of software. The information is sent in the header of the transported data.

A header is a small amount of metadata sent by one piece of software to another which describes the kind of information being transported. Typical header information includes the length, destination, MIME type etc.

MIME types have two parts, the type and the subtype (although the two together are referred to simply as a 'type'). The type is written in the form type/subtype. There are only a few top-level types, including audio, video, image, text, and application. There are innumerable subtypes.

The right MIME type for Theora video is 'video/ogg'.

A current server should send the right information. If your server does not send the right headers, you have to change the configuration of your webserver. However, you should first consider whether you know enough about configuring web services. If you are not feeling confident about this, then perhaps enlist the support of a friendly techie. Assuming you are using the Apache webserver (the most popular webserver on the web), and you feel confident to change the server configuration, there are two ways you can do this:

- you can add two lines to your Apache webserver configuration

- you might be able to provide the extra settings by placing a .htaccess file in your video folder

For the first strategy you need access to your web server configuration file (httpd.conf). In this case all files delivered by your webserver will be sent with the correct information. This is the best solution. However, if you do not have access to your webserver configuration files (for example, if you are using a shared hosting service) then you may wish to try the second strategy. The second strategy will only affect the videos served from the folder in which you put the .htaccess file.

For both strategies you need to enter this information in the appropriate file:

AddType video/ogg .ogv

AddType application/ogg .ogg

For httpd.conf and .htaccess files you can place this information at the end of the file. If you do not have an .htaccess file you can just create a blank file and add this information (no other information is required).

oggz-chop

Using Apache you can also get a lot more sophisticated. You can, for example, enable URLs that reference and play back only part of a given Theora video file. If you want to include only parts of your video in your webpage, or allow linking to a specific time in the video, you can use oggz-chop on the server. With oggz-chop installed you can address segments of the video by providing an offset parameter in the URL. To include second 23 to second 42 of your video you would use

<video src="http://example.com/video.ogv?t=23.0/42.0"></video>
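If you generate such links from a script, the offset parameter can be built mechanically. The following is only a sketch: the function name chop_url is hypothetical, and the t=start/end form is taken from the example above, with no claim about other formats oggz-chop may accept.

```python
def chop_url(video_url, start, end):
    """Append a t=start/end query (both in seconds) to a video URL."""
    return f"{video_url}?t={float(start):.1f}/{float(end):.1f}"


# Seconds 23 to 42 of the example video.
print(chop_url("http://example.com/video.ogv", 23, 42))
```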

Using oggz-chop requires the actions module to be enabled in Apache and oggz-chop to be installed on the server. Describing how to enable the actions module is beyond the scope of this document, so you may want to consult an Apache guru, or read some documentation on this subject, before attempting it.

However...if you know you have Apache2 installed and you have administrator access to your server you could try this command to enable the actions module:

sudo a2enmod actions

Try it at your own risk...

oggz-chop is part of oggz-tools, which you can (probably) install with:

sudo apt-get install oggz-tools

With those installed, you have to enable oggz-chop with these two lines in your Apache configuration or .htaccess file:

ScriptAlias /oggz-chop /usr/bin/oggz-chop

Action video/ogg /oggz-chop

Allowing Remote Access

Videos, unlike images, cannot be embedded from remote sites unless those sites specifically allow this. To allow other sites to include your videos on their domain, add this line to your Apache configuration or .htaccess file:

Header Set Access-Control-Allow-Origin "*"

With this setting, the server responds with an additional header 'Access-Control-Allow-Origin: *' which means that the videos can be embedded in any webpage. If you want to restrict access to the videos (for example, to be only accessible from http://example.org) you have to change it to:

Header Set Access-Control-Allow-Origin "http://example.org"

Note that now your videos cannot be embedded on domains other than example.org. The Access-Control-Allow-Origin header takes a single origin (or "*"); browsers generally do not accept a list of domains in it, so allowing several specific domains requires the server to send back the matching origin for each request.

https://developer.mozilla.org/En/HTTP_access_control has a more detailed description of HTTP access control.
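Browsers generally expect a single origin (or "*") in this header, so one common approach for allowing a few specific domains is to compare the Origin header of each request against an allow-list and echo it back when it matches. The sketch below shows only that decision logic; the allow-list contents and the function name are hypothetical, and wiring it into a real server is left out.

```python
# Hypothetical allow-list of sites that may embed the videos.
ALLOWED_ORIGINS = {"http://example.org", "http://example.com"}


def cors_headers(request_origin, allowed=ALLOWED_ORIGINS):
    """Return the response headers to add for this request's Origin value."""
    if request_origin in allowed:
        return [
            ("Access-Control-Allow-Origin", request_origin),
            # Tell caches that the response depends on the Origin header.
            ("Vary", "Origin"),
        ]
    return []  # unknown origin: send no CORS header at all


print(cors_headers("http://example.org"))
print(cors_headers("http://elsewhere.example"))
```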

Serving Videos via a Script

As a final strategy, if you do not have control over your hosting setup but want to use videos anyway, it is possible to use a small PHP or CGI script to set the right headers and serve the video. Such a script could look like this:

<?php

$video = basename($_GET['name']);

if (file_exists($video)) {

$fp = fopen($video, 'rb');

header('Access-Control-Allow-Origin: *');

header('Content-Type: video/ogg');

header('Content-Length: ' . filesize($video));

fpassthru($fp);

} else {

header('HTTP/1.0 404 Not Found');

echo "404 - video not found";

}

?>

If this script is placed as index.php in your video folder, you would use http://example.com/videos/?name=test.ogv instead of directly linking to your video (http://example.com/videos/test.ogv).

<video src="http://example.com/videos/?name=test.ogv"></video>
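For hosts that offer Python rather than PHP, the same idea can be sketched as a small WSGI application. This is a loose Python rendering of the script above, not a tested drop-in replacement: the names video_app and VIDEO_DIR are hypothetical, and how the application is mounted depends on your hosting setup.

```python
import os
from urllib.parse import parse_qs

VIDEO_DIR = "."  # hypothetical: folder that holds the .ogv files


def video_app(environ, start_response):
    """Serve ?name=<file> from VIDEO_DIR with the headers Theora needs."""
    query = parse_qs(environ.get("QUERY_STRING", ""))
    # basename() strips any directory part, like basename() in the PHP script.
    name = os.path.basename(query.get("name", [""])[0])
    path = os.path.join(VIDEO_DIR, name)

    if name and os.path.isfile(path):
        with open(path, "rb") as video_file:
            data = video_file.read()  # fine for a sketch; large files should be streamed
        start_response("200 OK", [
            ("Access-Control-Allow-Origin", "*"),
            ("Content-Type", "video/ogg"),
            ("Content-Length", str(len(data))),
        ])
        return [data]

    body = b"404 - video not found"
    start_response("404 Not Found", [
        ("Content-Type", "text/plain"),
        ("Content-Length", str(len(body))),
    ])
    return [body]
```

To try it locally, the standard library's wsgiref.simple_server.make_server("", 8000, video_app).serve_forever() would serve it on port 8000.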

Hosting Sites

There are many existing websites that will host your Theora content for free. You can then either link directly to the content from your own webpages, or refer people to the content hosted on the external site.

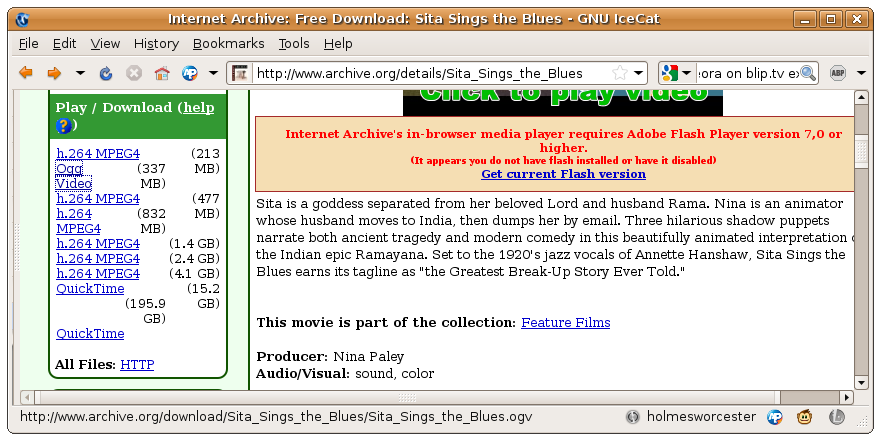

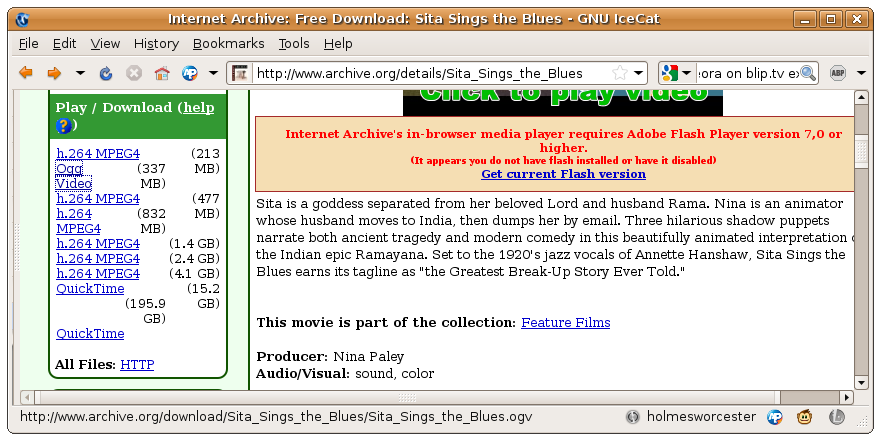

Archive.org

Archive.org (http://www.archive.org) is the website of the not-for-profit organization known as the Internet Archive, which focuses on the preservation of digital media. To post to the archive, you must also license the work under a Creative Commons (or similar) license. This is usually not a problem if you made the work yourself, but may be an issue if you used copyrighted material (e.g., music within a video) within your work, or if you are uploading something someone else made.

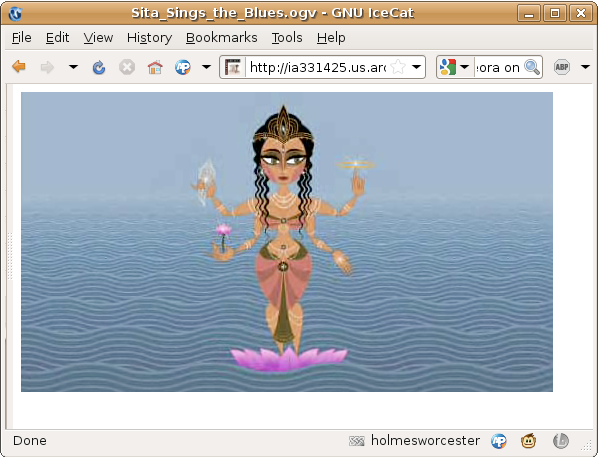

You can host Ogg Theora content at the Internet Archive for free, and then link to it from your own website. Archive.org will convert your video to Ogg Theora and many other formats when you upload it.

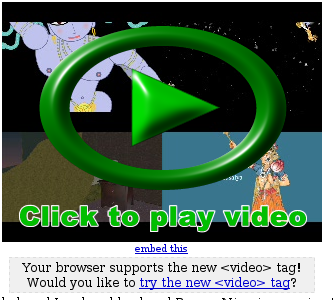

Note: when you visit a page on archive.org, it will notify you if your browser supports the video tag. If you see this message...

Simply reload the page and look for the message under the video icon:

At this point, click the link that says "try the new <video> tag" and click the following link to always use the video tag. Then your videos will display in Theora. If you'd like a direct link to the Theora version of the video, copy the "Ogg Video" link in the left sidebar. When you have that link, you can link directly to the video and anyone with a compatible browser will see the video play:

Dailymotion

Dailymotion (http://dailymotion.com/) is one of the larger video sharing sites, and recently they have been dabbling with Theora support. They have converted over 300,000 videos to Theora, and by applying for a "motion maker" account (and getting approved) you can publish videos there using Theora. Their experimental Theora portal is here: http://openvideo.dailymotion.com/. Note: not all videos in the portal currently display in Theora, so be sure to verify that your videos are working before relying on this service.

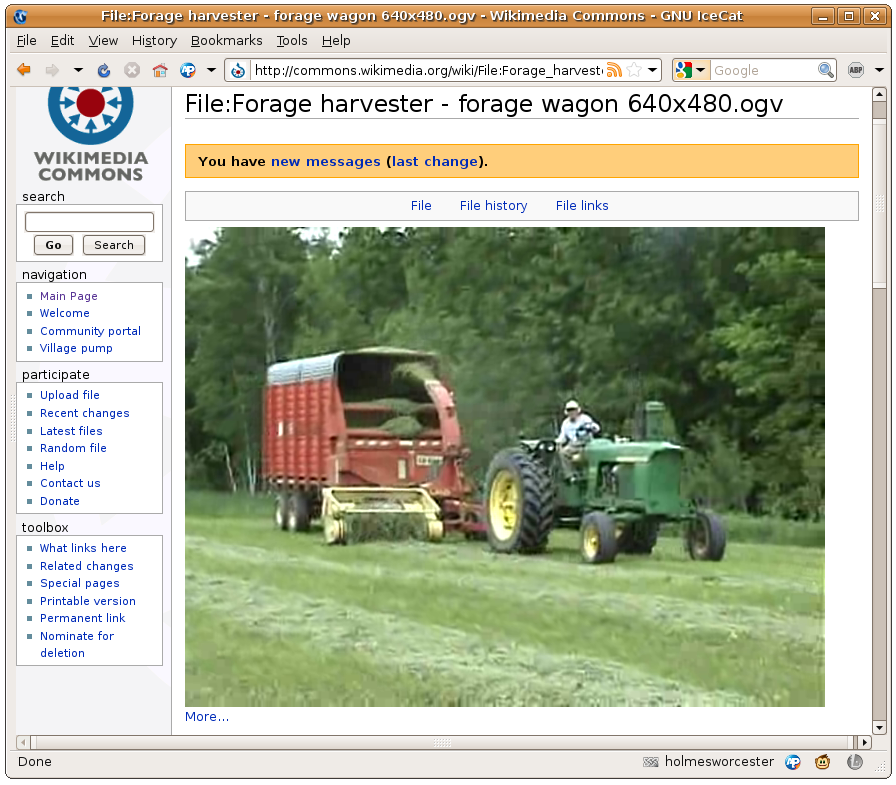

Wikimedia Commons

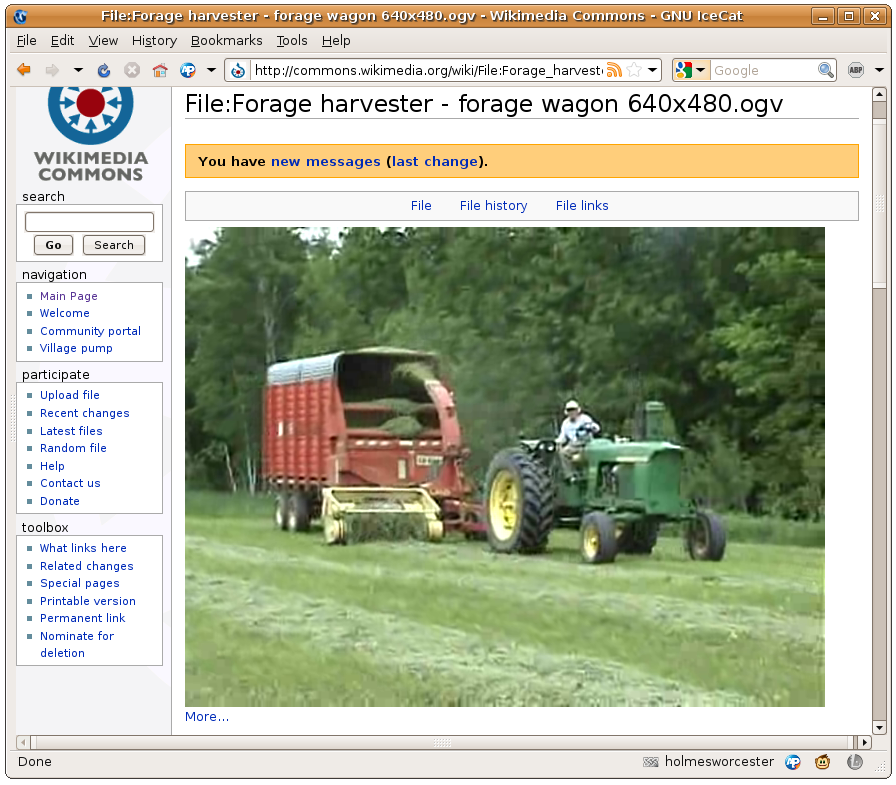

Wikimedia Commons ( http://commons.wikimedia.org/ ) is a website managed by the Wikimedia Foundation ( http://wikimediafoundation.org/ ), a not-for-profit organization that also manages Wikipedia. It is a database of media files available for anyone to use for any purpose. It's an open website that anyone can contribute to, and it uses wiki software that allows for easy collaboration.

The site is managed entirely by volunteer editors, who also create the majority of its content by contributing their own work. The community is multilingual, with translators available for dozens of languages. It only collects material that is available under free content licenses or in the public domain. You can upload Ogg Theora files to Wikimedia Commons.

Introduction

Encoding is the process of creating a Theora file from raw, uncompressed source video material. In case the source video material exists in some non-raw, compressed form, an intermediate decoding step is needed before creating the Theora video. This Decoding-Encoding is often referred to as Transcoding, though often Encoding is used as a synonym.

Software programs performing the encoding (or transcoding) are called encoders. Various Theora encoders exist, for example ffmpeg2theora and VLC (http://en.wikipedia.org/wiki/Theora#Encoding), to name just a few.

Before encoding the user has to decide on at least two parameters:

- the image quality of the created Theora file

- the audio quality

Depending on the encoder used, more options might be available to control the encoding process:

- clipping a configured amount of the frames' borders during encoding

- rescaling the video resolution

- changing the frame-rate of the video

- handling the video-audio synchronisation

- setting the keyframe-interval

Video Quality, Bit-Rate and File Size

Most Theora encoders allow the user to directly specify the subjective quality of the encoded video, usually on a scale from 0 to 10. The higher the quality, the bigger the resulting Theora files. Most encoders can alternatively be configured to encode for a given average target bitrate. While this option is useful for generating Theora video files for streaming, it sometimes yields sub-optimal quality.

Recent versions of some Theora encoders feature a two-pass encoding mode. Two-pass encoding allows the encoder to hit a configured target bit-rate with optimum video quality, and should thus be comparable to quality-controlled encoding, though it comes at the cost of taking twice the encoding time. By its nature, live video cannot be generated with two-pass encoding.

Video Resolution and Frame-Rate

There can be good reasons to further reduce the height and width (video resolution) of your video when encoding to Theora.

- If the encoder produces files that are too large, even at low quality settings around 0, then reducing the video resolution will help reduce the file size further

- If your required maximum file size forces a very low quality setting of 0..3, leading to unacceptable perceived quality, then reducing the video resolution means more data can be dedicated to improving the quality

- If playback of the encoded video should work even on low-performance computers, then a lower video resolution will help

- If your source video material has a resolution higher than standard-definition video, it would be better to reduce the video resolution, since the Theora video codec is not designed for high-definition video and might not perform very well at it

If your source video material has an unusually low resolution, and you can spare the bits, increasing the video resolution during encoding might have a positive effect on overall video quality.

Adjusting the frame-rate during encoding is generally a bad idea, as it often leads to jerking, reducing perceived quality by a large amount. However, if you require a very low target file size, try reducing the frame-rate to exactly half the source frame rate. This might do the job of sufficiently reducing file size without degrading quality to an unacceptable level.

Clipping the Frames in the Video

Sometimes video source material does not make use of the full video frames, leaving black borders around the video. It is a good idea to remove black or otherwise unused parts from the video as this usually improves the quality and file size of the encoded Theora file. If possible, try to keep video width and height multiples of 16. The Theora format is capable of, but not very efficient at, storing video using other arbitrary frame sizes.
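When choosing crop values, it can help to compute the nearest frame size that is a multiple of 16. A minimal sketch, assuming you round down (since cropping can only remove pixels); the function name is hypothetical:

```python
def round_down_to_16(pixels):
    """Largest multiple of 16 that is not bigger than the given size."""
    return (pixels // 16) * 16


# A 700x560 picture area would be cropped to 688x560.
print(round_down_to_16(700), round_down_to_16(560))
```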

Video-Audio Synchronization

In an ideal world, the encoder would just copy the video-audio synchronization of the source video material to the created Theora file. In practice, however, this is sometimes just not possible. Theora video files must adhere to a constant frame rate throughout the full file. The playback speed of the audio tracks is also constant in Theora. Some source video material, however, might not have a 100% constant frame rate. Sometimes frames are simply missing from the source video due to recording errors or as a result of using video cutting software.

In these cases, the encoding process must actively adjust audio-video synchronization. This is done either by duplicating and/or dropping frames in the video, or by changing the speed of the audio tracks.

Keyframe-Interval

Many Theora encoders allow changing a parameter named keyframe interval. A larger keyframe interval reduces the target file size without sacrificing quality. Keyframes are the frames in the video that a player can seek to directly during playback. To seek to other points in the video, all frames from the last keyframe on have to be decoded first. In a video with 24 frames/second, a keyframe interval of 240 implies that direct seeking is only possible with a granularity of 10 seconds. Cutting and concatenation of the encoded video will also be limited to the keyframe granularity.

As a rule of thumb, never set the keyframe interval to more than 10 times the target video frame rate.
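The relationship between keyframe interval, frame rate and seeking granularity is simple arithmetic, sketched below. The function names are hypothetical; the factor of 10 is the rule of thumb from the text.

```python
def seek_granularity_seconds(keyframe_interval, frame_rate):
    """Seconds between two points a player can seek to directly."""
    return keyframe_interval / frame_rate


def max_keyframe_interval(frame_rate):
    """Rule of thumb from the text: at most 10 times the frame rate."""
    return int(10 * frame_rate)


print(seek_granularity_seconds(240, 24))  # the example from the text
print(max_keyframe_interval(25))          # upper limit for a 25 frames/second video
```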

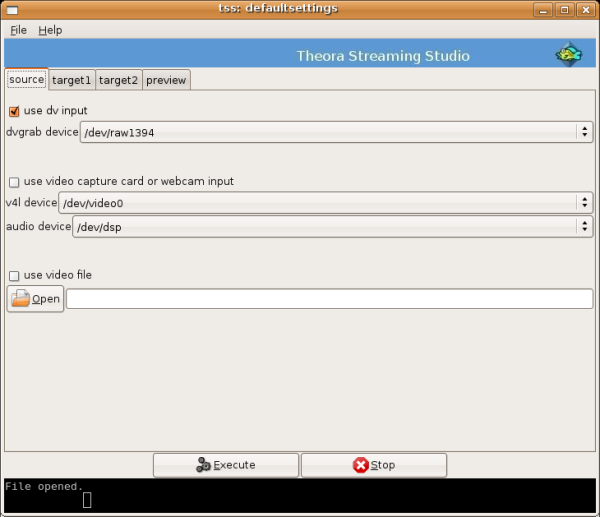

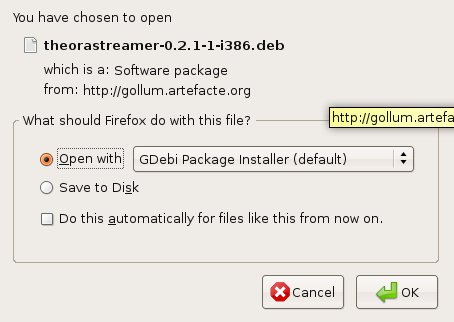

Firefogg

Firefogg is the name of an extension to the Firefox web browser that adds support for encoding your video files to Theora using a nice web interface. It also enables websites to provide a video-upload service that takes videos from your computer, converts them to Theora on-the-fly, and uploads the generated Theora file to a website.

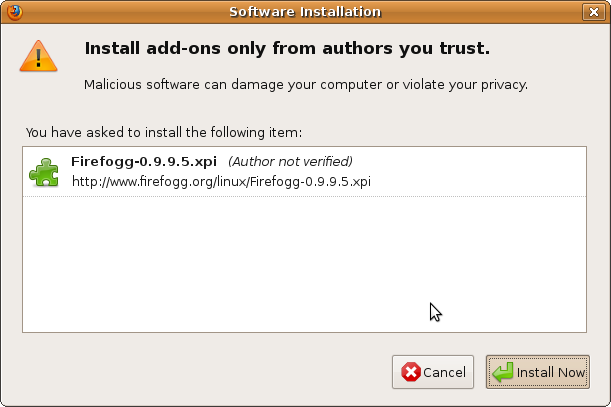

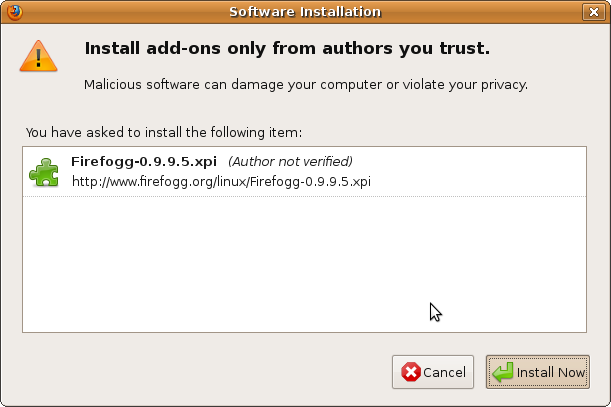

Installation

Firefogg requires the Firefox web browser, at least version 3.5. If your version of Firefox is older, or in case you do not have Firefox installed at all, visit www.mozilla.com to download an up-to-date version.

Once you have a recent Firefox version, use it to visit the Firefogg homepage at www.firefogg.org:

Now click on Install Firefogg. At the top of the page, Firefox now asks you to allow installation of the new software:

Click on Allow, to pop up the following dialog:

Click on Install Now. After installation, you are asked to restart Firefox. Click on Restart Firefox to proceed.

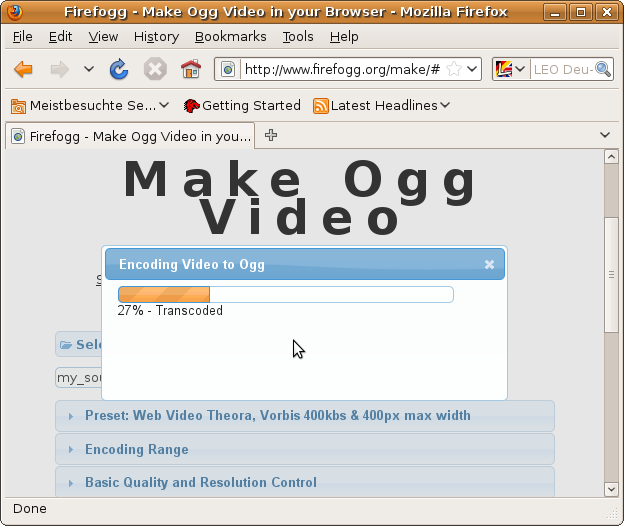

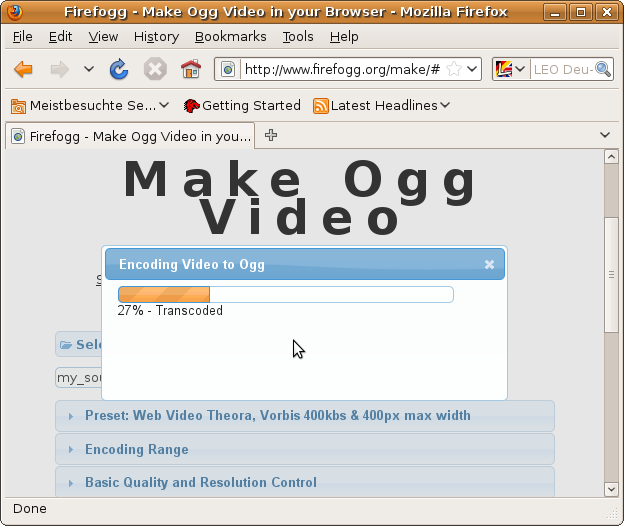

Encoding your first video

To encode videos with Firefogg, you need an internet connection. Parts of the encoding software reside on the internet and will not be installed to your computer.

The encoding software is started by visiting http://firefogg.org/make. The following site shows up:

Click on Select File, which pops up a file dialog allowing you to select the source video file to encode. In this example we are using the video my_source_video.mp4. After selecting the file, you are brought to a dialog that asks you for tuning encoding parameters:

For now, just select Save Ogg. You are asked to select the name of the Theora file to create. We select the name my_theora_video.ogv (remember, the correct file name extension for Theora videos is .ogv). Now encoding starts, using the default set of parameters:

Now wait for the encoding to progress to 100% and you're done.

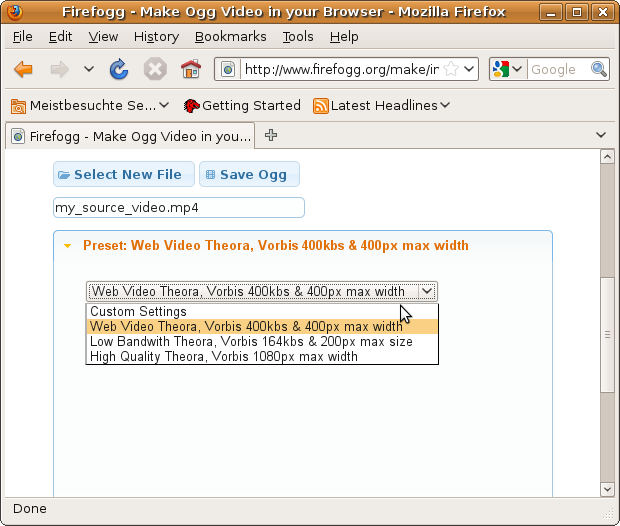

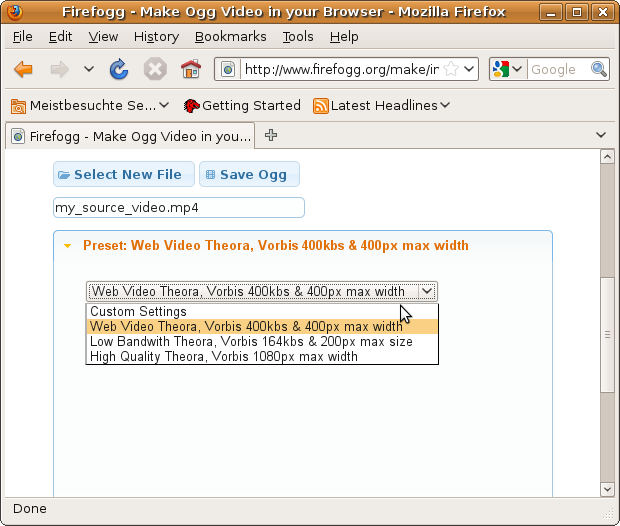

Advanced Encoding Options

Using the default set of parameters for encoding video yields very small Theora files optimized for web streaming. Perceived video quality will actually be quite low for most tastes. But wait, Firefogg is as advanced as most other Theora encoders. After a little tuning, very high quality videos can be easily created. Tuning options are available on the web page below the Save Ogg button.

The easiest way to configure encoding is by selecting one of three presets available from the Preset menu:

The first two presets, called Web Video Theora and Low Bandwidth Theora, are both optimized for streaming video over the internet. If you intend to play back the created Theora file solely from CDs, USB sticks or your computer's hard disk, you should avoid them. Go for the High Quality Theora preset instead.

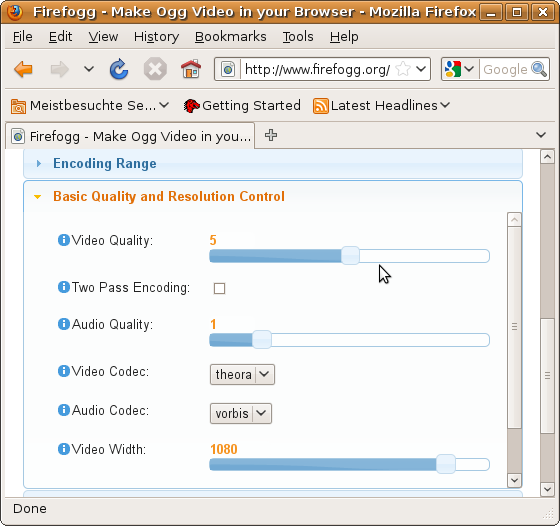

Custom Settings

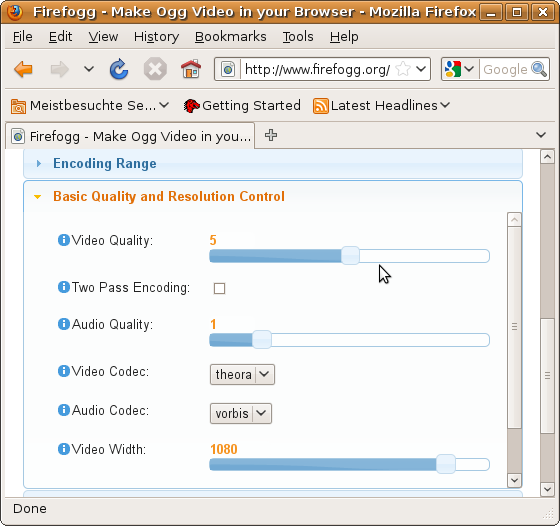

More details of the encoding process can be configured by selecting Custom Settings and manually adjusting lower-level options in the other available menus. Let's have a look at the most important menu, Basic Quality and Resolution Control:

Here you can control the quality of the created Theora file, and also choose to change the frame size of the encoded video. Encoding a video for a target quality (instead of a target bit rate) is the preferred encoding mode for Theora, so in most cases you won't want to try the encoding options in the other menus.

Other Uses of Firefogg

Once installed, Firefogg can be used on websites that support it. There is a list of sites that support Firefogg at http://firefogg.org/sites.

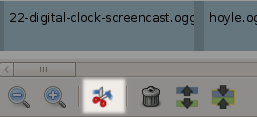

Encoding with VLC

The VLC media player (www.videolan.org) allows easy encoding of video files to Theora. Encoding can either be performed via the graphical user interface (GUI), or from the command line. The following instructions have been written for use with VLC version 1.0.1 running on Ubuntu. Other versions may differ in details, though the overall process will be the same.

Using the GUI

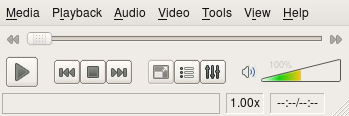

When you start VLC, you are immediately greeted by its main window:

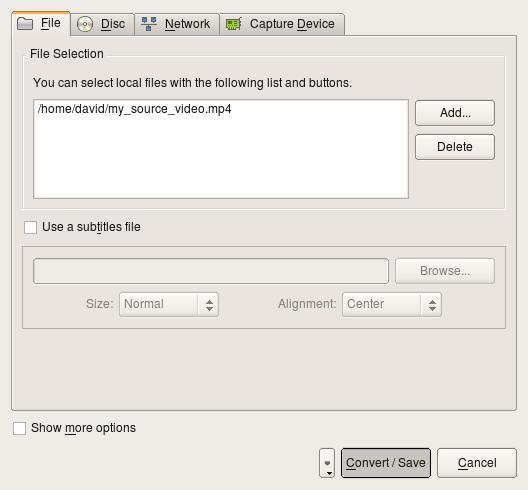

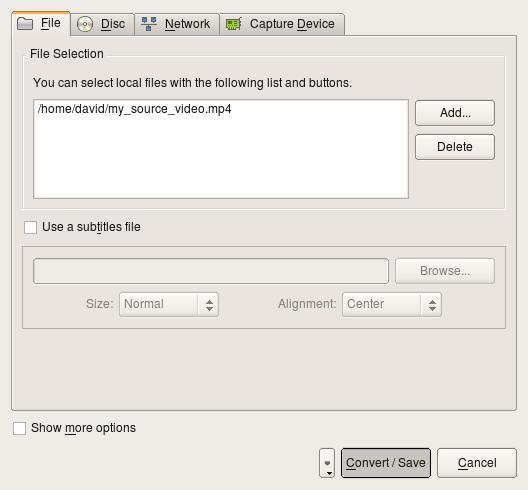

In the Media Menu select Convert/Save. This brings you to the following dialog:

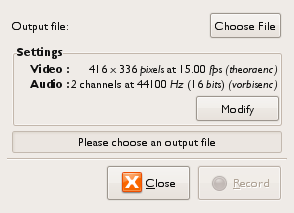

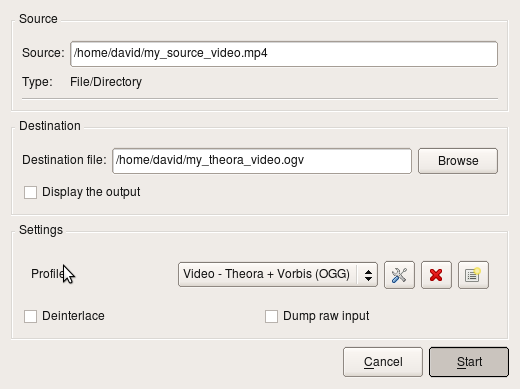

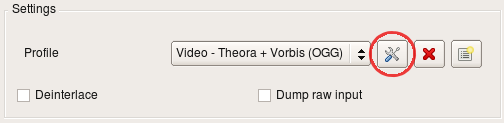

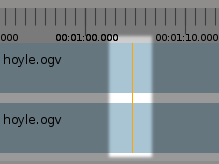

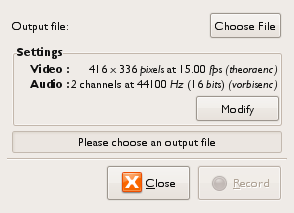

Under File Selection click Add and select the source video file for encoding. Then click on Convert/Save at the bottom. This leads you to the encoding dialog:

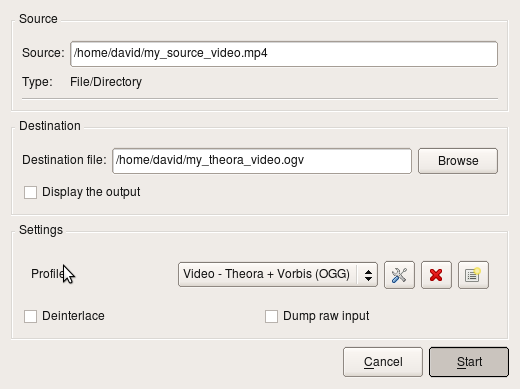

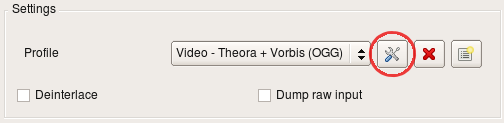

Under Destination click Browse and select the location and name of the Theora file that you want to create. Remember that the correct file name extension for a Theora file is .ogv. Under Settings set the Profile to "Video - Theora + Vorbis (OGG)".

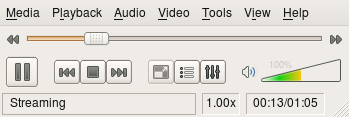

Then press the Start button. This starts the encoding process and brings you back to VLC's main dialog:

At the window's bottom, the text "Streaming" is now displayed. This indicates that it is busy encoding your file. The slider will slowly move to the right as encoding progresses. Once encoding is done, the slider jumps back to the left and the "Streaming" display disappears.

Advanced Options

If you are not satisfied with the encoding result, try adjusting some of the more advanced encoding parameters. In the previous encoding dialog, before hitting Start, press the button with the "tool" icon, just to the left of the profile selection:

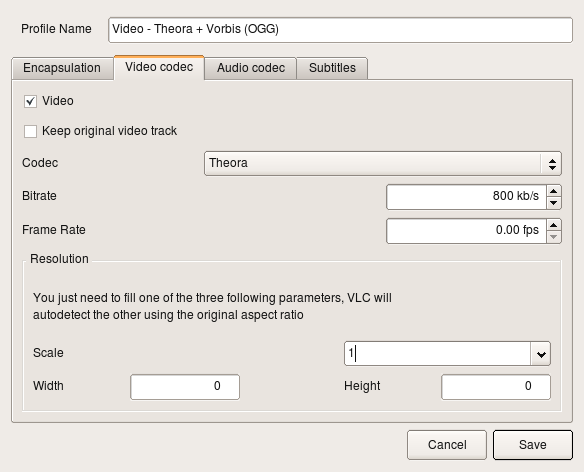

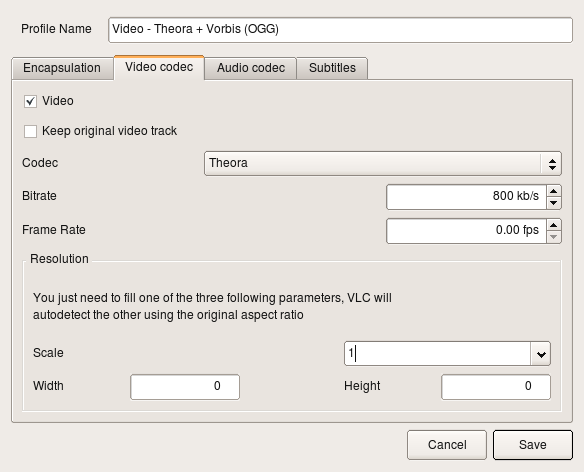

This opens up a new dialog with four tabs, labeled "Encapsulation", "Video codec", "Audio codec" and "Subtitles". Make sure you do not change any parameters under "Encapsulation". The video encoding options you need are under the "Video codec" tab, shown below:

If your encoding result's video quality was too low, try increasing Bitrate. If your video source material has a very high resolution, try setting Scale to 0.5 to encode at half the original resolution. As of this writing, changing video resolution fails to work properly for some source material, when using VLC 1.0.1.

Encoding from the Command Line

If you are encoding a number of files to Theora, clicking through the VLC dialog windows can become tedious and error-prone. Here VLC's command line interface comes to the rescue. While not as intuitive as the GUI, it allows you to exactly repeat an encoding process with constant parameters.

Use the following command to encode your source video with the same parameters as used in the GUI example above. In this example the source file is called "my_source_video.mp4" and the Theora file "my_theora_video.ogv"; you can, of course, use whatever names you want:

vlc my_source_video.mp4 \

--sout="#transcode{vcodec=theo,vb=800,scale=1,\

deinterlace=0,acodec=vorb,ab=128,channels=2,\

samplerate=44100}:standard{access=file,mux=ogg,\

dst='my_theora_video.ogv'}"

All the parameters that had previously been specified in the advanced encoding options dialog window, are now given in text form. The only options whose meaning might not be immediately obvious are vb which means video bitrate and ab, which refers to the bitrate of the encoded audio.
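To repeat the same encoding for a number of files, the command can be assembled by a small script. The sketch below only builds the argument list, using the options shown in the command above; the function name is hypothetical, it does not start VLC, and whether VLC exits by itself after each file is not covered here.

```python
from pathlib import Path


def vlc_transcode_args(source, destination, video_kbit=800, audio_kbit=128):
    """Build the vlc argument list for one file, mirroring the command above."""
    sout = (
        "#transcode{"
        f"vcodec=theo,vb={video_kbit},scale=1,deinterlace=0,"
        f"acodec=vorb,ab={audio_kbit},channels=2,samplerate=44100"
        "}:standard{"
        f"access=file,mux=ogg,dst='{destination}'"
        "}"
    )
    return ["vlc", source, f"--sout={sout}"]


# Hypothetical list of source files; each gets an .ogv file of the same name.
for source in ["clip_one.mp4", "clip_two.mp4"]:
    destination = str(Path(source).with_suffix(".ogv"))
    print(vlc_transcode_args(source, destination))
```

Each list could then be handed to subprocess.run() to actually start the encoding.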

Recommended Encoding Options

The command line shown above merely uses the parameter set provided by the GUI, which is in no way optimized for Theora encoding. We can do much better by using options that are only available on the command line. A better option might be to use the following command line as a basis for encoding. Tweak it to suit your needs:

vlc my_source_video.mp4 \

--sout-theora-quality=5 \

--sout-vorbis-quality=1 \

--sout="#transcode{venc=theora,vcodec=theo,\

scale=0.1,deinterlace=0,croptop=0,\

cropbottom=0,cropleft=0,cropright=0,\

acodec=vorb,channels=2,samplerate=44100}\

:standard{access=file,mux=ogg,\

dst='my_theora_video.ogv'}"

In this example video and audio quality are specified as numbers in the range 0 (low quality) up to 10 (highest quality). In case you want to remove black or noisy borders around the video, adjust the options croptop through to cropright.

These examples require that your VLC installation comes with the VLC Theora plugin. Verify the plugin's presence by typing:

vlc -p theora

Even if this prints out "No matching module found" it may still be possible to encode to Theora, by using the ffmpeg plugin that supports Theora as well. However, the advanced "--sout-theora-quality" option is not available with ffmpeg.

Why not to use the GUI

The Theora codec works best when encoding for a specified video quality. Encoding for a given target bitrate will always give inferior results for a file of the same size. Unfortunately there is no way to specify target video quality via the GUI, which is why you just shouldn't use it for any kind of professional encoding work. Also, note that you cannot crop borders of the video when encoding from the VLC GUI.

Keep in mind that this chapter was written using the 1.0.1 version of VLC, which was current at the time of this writing. Newer versions may outgrow these limitations.


ffmpeg2theora

ffmpeg2theora is a very advanced Theora encoding application. The advanced functionality comes at the price of having to use a command line, as no graphical user interface is provided. For GNU/Linux, Mac OS X and Windows, download ffmpeg2theora from http://v2v.cc/~j/ffmpeg2theora/download.html. If you are running a recent version of GNU/Linux, chances are good that your distribution already comes with software packages for ffmpeg2theora that can be installed with the distribution's package manager.

Basic Usage

Open a command prompt and enter:

ffmpeg2theora my_source_video.mp4 -o my_theora_video.ogv

This encodes the source video file "my_source_video.mp4", creating a new Theora video file named "my_theora_video.ogv".

Adding Parameters

When you are unhappy with the result of encoding, it's time to start tuning encoding parameters. We'll start with setting the video quality of the encoded video. Quality is given as a number in range 0 (lowest quality, smallest file) up to 10 (highest quality, largest file). Try this to encode at a high video quality of 9, and a very high audio quality of 6:

ffmpeg2theora my_source_video.mp4 -o my_theora_video.ogv \

--videoquality 9 --audioquality 6

The following example exposes the basic encoding parameters. Just copy-paste and adjust it to your needs:

ffmpeg2theora my_source_video.mp4 -o my_theora_video.ogv \

--videoquality 9 --audioquality 6 \

--croptop 0 --cropbottom 0 --cropleft 0 --cropright 0 \

--width 720 --height 576 \

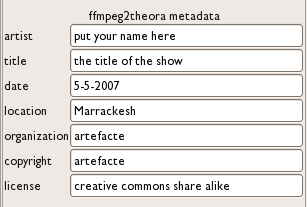

--title "Video Title" --artist "Artist Name" --date "1997-12-31"

If you do not wish to scale the video's frame size, drop the --width and --height options. There is no way to specify a scale factor, so check the input video's size and compute the target frame size as required. In most cases it is better to specify only one of the --width or --height options; the missing option is then automatically adjusted to a correct value.
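Since the frame size has to be worked out by hand, a few lines of code can do the multiplication. This is only a sketch with a hypothetical function name; it rounds to whole pixels and prints the options in the form used in the command above.

```python
def scaled_size(width, height, factor):
    """Scale a frame size by a factor, rounded to whole pixels."""
    return round(width * factor), round(height * factor)


# Half the size of a 720x576 source video.
width, height = scaled_size(720, 576, 0.5)
print(f"--width {width} --height {height}")
```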

Advanced Options

Ffmpeg2theora supports a multitude of other parameters for advanced use, which cannot all be described in detail here. To get an overview of all available options and short descriptions, type:

ffmpeg2theora --help

Depending on the operating system you are using, you might be able to open up the ffmpeg2theora manual by typing

man ffmpeg2theora

The following options often prove useful:

--sync

Copy any audio-video synchronization of the source video file to the destination Theora video. Depending on the source video used, this may fix problems of audio-video delay drift introduced by the encoding process.

--keyint <N>

Set the keyframe interval, i.e. the number of frames between keyframes, of the generated file. Large values of <N> lead to a reduced file size; however, seeking and cutting do not work well with Theora files that have a large keyframe interval.

--framerate <N>

Set the frame rate of the generated video file. In case you are attempting to create Theora videos with extremely small file size, try specifying half the input video's framerate.

--starttime <N> --endtime <M>

These two options allow you to copy only a part of the source video when encoding. Specify <N> and <M> as the number of seconds from the start of the video.

Two-Pass Encoding

The upcoming version 0.25 of ffmpeg2theora is going to support a two-pass encoding mode, which is described in this section. Once version 0.25 is released, just download it from http://v2v.cc/~j/ffmpeg2theora/download.html. The examples given below are not going to work with older versions.

Why Two-Pass Encoding

A lot of hype surrounds two-pass encoding. Many people assume that you need to encode in two passes to achieve a constant subjective quality throughout a video. This is how it used to be for many non-free video codecs such as DivX. However, as we have seen, ffmpeg2theora is well capable of encoding for a constant target quality in a single pass using the option --videoquality.

The only real advantage of using two-pass mode over using --videoquality, is the ability to create a Theora video of a given file size. Imagine you want to encode a video, which must fit onto a single CD with 700 MB of available storage. You want a constant video quality, but in advance you can't possibly guess which --videoquality will exactly hit 700 MB. Using two-pass mode exactly achieves that.

Using Two-Pass Mode

So you want to encode "my_source_video.mp4" into a Theora video, with a file size of exactly 700 MB. ffmpeg2theora does not allow you to directly specify the size of the encoded file. Instead you specify the average video bitrate for the video. Note that the audio is also going to require some data, which has to be taken into account.

To decide on an average video bitrate for the file we first need to find out the duration of the source video "my_source_video.mp4". Ffmpeg2theora can help us with that. Type:

ffmpeg2theora --info "my_source_video.mp4"

Which prints, among other information:

{

"duration": 2365.165100,

"bitrate": 6437.331055,

[..]

}

The duration shown is in seconds. If we divide the available 700 MB of space by the 2365 seconds to encode, we come to an average byte rate of 296 kByte/s. Multiplied by 8 we get the average bit rate of 2368 kBit/s.

We cannot use the full 2368 kBit/s for our video only. We also have an audio track, that is going to take 128 kBit/s. The average video bit rate we can use is thus 2240 kBit/s. Of this we subtract another 1% to account for any overhead in the encapsulation that is used to contain the video and audio tracks. This leaves us with 2218 kBit/s for the video and 128 kBit/s for the audio.
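The calculation above can be written down as a short script. The sketch follows the same steps and the same rounding as the text, and assumes 1 MB = 1000 kByte as the arithmetic above does. The sample string contains only the two fields shown in the --info output above; the function name is hypothetical.

```python
import json

# Sample of the fields shown above; real --info output contains more keys.
info = json.loads('{"duration": 2365.165100, "bitrate": 6437.331055}')


def video_bitrate_kbit(target_mb, duration_s, audio_kbit=128, overhead=0.01):
    """Follow the steps in the text, rounding to whole kByte/s and kBit/s."""
    byte_rate = round(target_mb * 1000 / duration_s)  # kByte/s for the whole file
    total_kbit = byte_rate * 8                        # kBit/s for audio and video together
    video_kbit = total_kbit - audio_kbit              # leave room for the audio track
    return round(video_kbit * (1 - overhead))         # reserve about 1% for container overhead


print(video_bitrate_kbit(700, info["duration"]))  # 2218
```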

The following command is going to perform the two-pass encoding, creating the Theora video file "my_theora_video.ogv":

ffmpeg2theora my_source_video.mp4 -o my_theora_video.ogv \

--two-pass --videobitrate 2218 --audiobitrate 128

Note that unlike other two-pass encoders, only one invocation of the ffmpeg2theora command is required. If you require more control, performing cropping, scaling etc., feel free to copy-paste other options from the examples given in previous sections.

Thoggen

Thoggen (http://www.thoggen.net/) is a simple, easy to use DVD extraction program for GNU/Linux, to create Theora videos. Note that only the video part of DVDs can be encoded; any menus present on the DVD are going to be stripped out.

Encoding a DVD using Thoggen involves two steps:

- Selecting the DVD titles to extract and encode

- Configuring parameters of the encoding process

If you do not have Thoggen installed, you will first have to install it. If you are running Ubuntu, simply type this in a terminal window:

sudo apt-get install thoggen

You will be asked for your password, and then Thoggen will be installed.

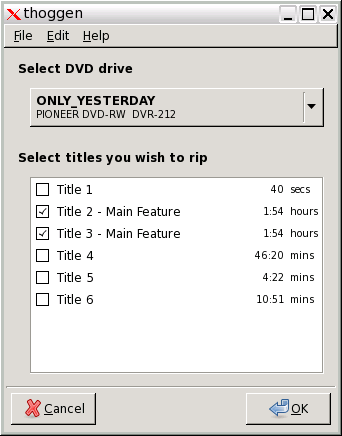

Selecting the DVD Titles to Encode

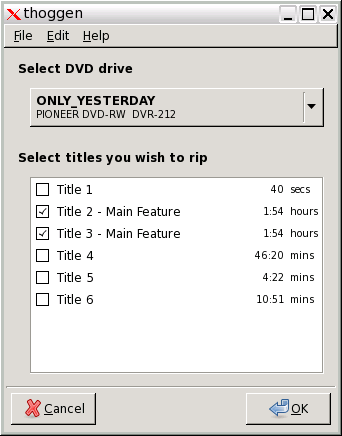

Upon starting, Thoggen will automatically detect any DVD media present in any DVD drive, presenting a list of titles it found on the DVD:

You are asked to select the titles that are going to be extracted and converted to Theora. The longest title is selected by default, as this is usually the main video. If this is not what you actually want, change it to suit your needs.

Note that you may also convert from a DVD image on your hard drive, instead of directly from a DVD. To do this select the image location from the 'File' menu. The same list of titles will then be displayed, as if it was taken directly from the physical DVD.

When done selecting, press OK and you will be taken to the next dialog.

Configuring the Encoding Process

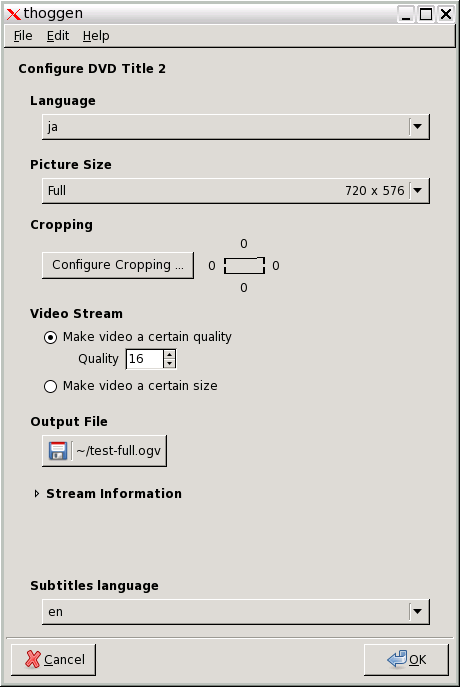

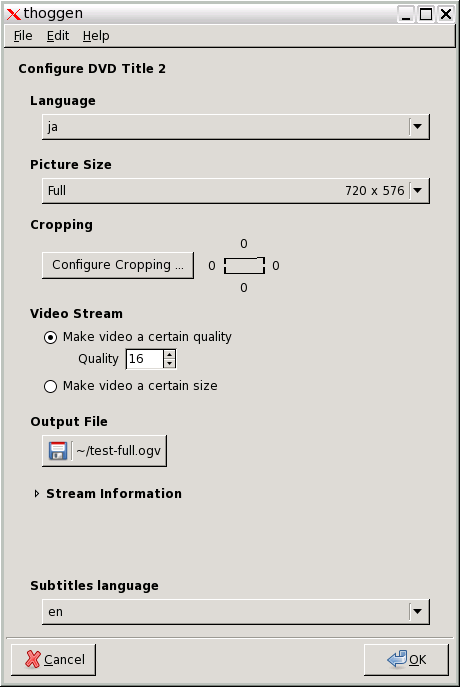

The following dialog now allows you to specify parameters of the encoding process:

If you selected more than one video in the previous step, this window will be presented once for each of them. For multi-language DVDs use this dialog to select which languages to include. Select the quality and video size, and where to save the video. If unsure which option to choose, just go with the defaults. Note that video quality is specified as a number in the range 1 (lowest quality, smallest output file) to 63 (highest quality, largest file).

When you are happy with the settings, press OK, to start the encoding. You are then presented with a slideshow preview of the video along with a progress bar, while waiting for the encoding process to finish.

You can now use VLC, or any other player supporting Theora, to view your copy of the DVD, without having to actually search for and insert the DVD into the drive whenever you want to watch your movie.

A Note on Cropping

Thoggen can remove borders at the left, right, top and bottom when encoding the DVD; this is available from the Configure Cropping button. It is often a good idea to remove about 5% of the movie's width and height. This is because DVDs are manufactured with ancient analog televisions in mind, so there are usually borders around the movie. Older DVDs sometimes contain noise and artifacts in the movie borders, only visible when playing the DVD on a PC.

For a typical DVD resolution of 720x576 pixels, try cropping 8 pixels on the left, right, top and bottom, leaving a total frame size of 704x560.
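The resulting frame size is easy to check with a few lines of code. A minimal sketch with a hypothetical function name; it also tests whether both sides are multiples of 16, which Theora stores most efficiently.

```python
def cropped_size(width, height, left=0, right=0, top=0, bottom=0):
    """Frame size that remains after cropping the given number of pixels."""
    return width - left - right, height - top - bottom


# 8 pixels off every side of a 720x576 DVD frame.
width, height = cropped_size(720, 576, left=8, right=8, top=8, bottom=8)
print(width, height, width % 16 == 0 and height % 16 == 0)
```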

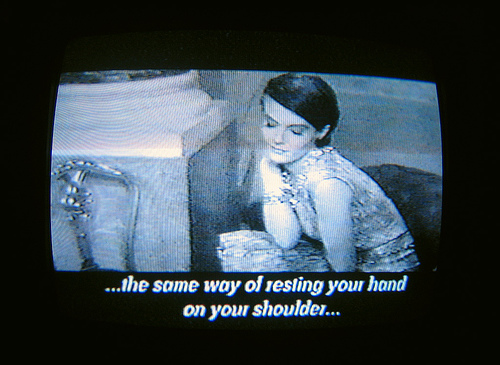

Subtitles

Most videos include people speaking in a certain language; to make these videos accessible and understandable to a global audience, the video must be subtitled or dubbed. Subtitles are by far the easiest to produce: audio dubbing requires time and software expertise, but you can create subtitles with just a video player and a text editor.

Finding Subtitles

Before starting a subtitling translation project, it's worth searching for existing subtitles, particularly if the video is a well-known or commercial work. For example, if you are including a scene from an American documentary in a video, there are resources to search for subtitles for this material. However, outside of well-known video and films, pre-created subtitles are rare, and subtitles available under an open license are even harder to find.

There are a few issues that come up when searching for subtitles. For cinematic films, for example, there are almost invariably many different versions of the film. One can imagine that any extra scene, extended title sequence, or formatting change can alter the timing of subtitles onscreen which many times renders subtitles useless. Therefore, it is important to find subtitles that are accurate for the audio of the particular film version. There are free software tools like Sub Downloader (http://www.subdownloader.net/) that help with this problem by matching subtitle sets to specific film versions. Another issue that comes up is the file format of the subtitle file itself. There are different formats for different types of video as well as different types of physical media (HD, DVD, Blu Ray etc.) which affect the selection of subtitles for a given piece of film.

The following are resources for finding subtitles :

Subtitle File Formats

A subtitle file format specifies the format of a file (text or image) containing the subtitle and timing information. Some text-based formats also allow for specifying styling information, such as colors or location of the subtitle.

Some subtitle file formats are:

- MicroDVD (.sub) - a text-based format, timed by video frame number, with no text styling

- SubRip (.srt) - a text-based format, timed by position in the video (hours, minutes, seconds), with no text styling

- VobSub (.sub, .idx) - an image-based format, generally used in DVDs

- SubStation Alpha / Advanced SubStation Alpha (.ssa, .ass) - a text-based format, timed by position in the video, with text styling and metadata attributes

- SubViewer (.sub) - a text-based format, timed by position in the video, with text styling and metadata attributes

We will focus only on the SubRip (.srt) format, which is supported by most software video players and subtitle creation programs. SRT files can be created and edited with any text editor, or with more specialised software like Jubler, Gnome Subtitles, Gaupol and Subtitle Editor.

Editing SRT files

An SRT subtitle file is just a text file, formatted in a simple way so that the player can read it and match each subtitle to the time at which it should be shown in the video. SRT is a very simple, widely used subtitle format. If you find an existing SRT file for the video you need to subtitle, it's easy to create subtitles for other languages once you know how an SRT file works.

An SRT file is made of a list of entries that look like this:

1
00:03:05,260 --> 00:03:07,920
Hello, world.

The first line is the number of the subtitle, incrementing from 1 to as many as needed. The second line gives the times at which the subtitle appears and disappears in the video, written as hours:minutes:seconds,milliseconds. The third line, and any lines after it up to the first blank line, are the subtitle text. One blank line is required to mark the end of the subtitle text. You can add as many such entries as you need for the remaining subtitles.

For the above example, it just means that the first subtitle shows up at 3 minutes 5.26 seconds into the video, disappears at 3 minutes and 7.92 seconds, and reads "Hello, world". That's it.
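To make the structure concrete, here is a minimal sketch of how a program might read such entries. It is not how any particular player does it; the names parse_srt and to_millis are made up for this example, and real-world files may need extra care (for instance a byte order mark at the start of the file).

```python
import re

# One timing line: start --> end, each as hours:minutes:seconds,milliseconds
TIMING = re.compile(
    r"(\d+):(\d{2}):(\d{2}),(\d{3}) --> (\d+):(\d{2}):(\d{2}),(\d{3})"
)


def to_millis(hours, minutes, seconds, millis):
    """Convert the four timestamp fields to a number of milliseconds."""
    return ((int(hours) * 60 + int(minutes)) * 60 + int(seconds)) * 1000 + int(millis)


def parse_srt(text):
    """Parse SRT text into a list of (number, start_ms, end_ms, text) tuples."""
    entries = []
    text = text.replace("\r\n", "\n").strip()
    # Entries are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", text):
        lines = block.splitlines()
        if len(lines) < 3:
            continue  # skip incomplete entries
        match = TIMING.match(lines[1].strip())
        if match is None:
            continue  # skip entries without a valid timing line
        fields = match.groups()
        entries.append(
            (
                int(lines[0].strip()),
                to_millis(*fields[:4]),
                to_millis(*fields[4:]),
                "\n".join(lines[2:]),
            )
        )
    return entries


SAMPLE = """\
1
00:03:05,260 --> 00:03:07,920
Hello, world.

2
00:03:08,500 --> 00:03:10,000
Second subtitle,
on two lines.
"""

for entry in parse_srt(SAMPLE):
    print(entry)
```

Times are kept as whole milliseconds so that no rounding occurs; the first entry starts at 185260 ms, which is 3 minutes 5.26 seconds.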

Creating subtitles from scratch

To create a subtitle file from scratch, you may want a more advanced tool that makes it easier to assign subtitles to specific points in a video. Jubler, Gnome Subtitles, Gaupol and Subtitle Editor are all free software tools, and worth checking out.

FLOSS Manuals has created a complete guide to Jubler (which is both free software and cross-platform). You can find the guide here: http://en.flossmanuals.net/jubler

Distribution

Using a subtitle format like .SRT means that you can distribute subtitles for many different languages without distributing a different version of your video for each language. You just need to make separate .SRT subtitle files for every required language, and make those files available on the web.

This strategy is very common in the world of subtitles. Including the subtitles as a separate file allows that file to be accessed, changed, or removed without affecting the video file itself. The disadvantage of this technique is that the subtitle file format becomes an issue: players must support the format in order to display the subtitles properly, and users must know a little about how subtitles work in order to play the subtitle files correctly. If you're distributing .SRT files with a downloadable video, be sure to include some instructions on how to retrieve and play the subtitles. If you're distributing video on the web, you can use HTML5 and JavaScript to offer different subtitle tracks on your webpage.

It's also possible to embed multiple .srt files within the video file itself. This gives the user the option to choose from among the translations you make available (or to display no subtitles at all) without the need for additional subtitle files. Patent-unencumbered video container formats that support this include the Matroska Multimedia Container (MKV) and the Ogg container format.

Embedding Subtitles

If you want your video file to contain the subtitles, so you don't have to distribute the .srt file separately, you need to embed the subtitle file into the video. The video encoding tool ffmpeg2theora has a few command line options to include subtitles in your video.

ffmpeg2theora is available for most operating systems, including Windows, Mac OS X, and GNU/Linux.

Three options are important for subtitles:

- --subtitles pointing to a subtitle file in SRT format,

- --subtitles-language to define the language of the subtitles

- --subtitles-encoding to specify the character set of the subtitles file used.

Let's have a look at some of the options needed when using ffmpeg2theora to embed SRT files in a Theora video file.

--subtitles-language - This option sets the language of the subtitles. Every language has a standard code, which lets people identify a language regardless of their own language. For example, in English the language spoken in Germany is called German, but in Germany it's called Deutsch. To prevent confusion, there is an international standard (ISO 639-1) that represents each language with a two-letter code. In this example, the code for German is 'de'.

--subtitles-encoding - This option specifies the encoding standard for the text, a complexity made necessary by the varying strategies for representing the wide range of characters used by all languages on earth. For a long time, computers used 7-bit character sets of 128 codes to represent the alphabet and other writing symbols. For example, US-ASCII has 95 printable characters (including the space) and 33 control codes. Numerous 8-bit character sets, with 256 codes, have appeared since then for alphabets and syllabaries, as well as several encoding systems using 16 bits for writing systems based on Chinese characters. However, 7 or even 8 bits is not enough space for all the typographical symbols in even one alphabet, much less for the dozens of writing systems in use today. People created the Unicode Character Set to support all languages at once. The UTF-8 encoding of Unicode is specified for use "on the wire", that is, in all external communications between systems.

However, a lot of people still use old encodings. The problem with these is that they overlap, using the same codes for completely different characters. The usual result of rendering a text according to an incorrect encoding is gibberish. By default, subtitles are expected to be in Unicode UTF-8 encoding. If they are not, you need to tell ffmpeg2theora with the --subtitles-encoding option. If you're writing in English, chances are you'll be writing in ASCII, ISO-8859-1 (Latin-1), or possibly Windows code page 1252. By design, US-ASCII is a subset of UTF-8, so you'll be OK there, but you will get into trouble if you use any extension of ASCII in a Unicode context.
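If you are unsure about a subtitle file, you can also convert it to UTF-8 before embedding it. The following Python sketch illustrates the idea on raw bytes; the helper names are made up for this example, and you still have to know (or guess) the original encoding yourself, since it cannot be reliably detected from the bytes alone.

```python
def is_valid_utf8(data):
    """Return True if the bytes can be decoded as UTF-8."""
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True


def to_utf8(data, source_encoding):
    """Re-encode subtitle bytes from source_encoding to UTF-8."""
    return data.decode(source_encoding).encode("utf-8")


# Plain ASCII text is already valid UTF-8.
print(is_valid_utf8(b"Hello, world."))  # prints True

# The same Spanish text as Latin-1 bytes is not valid UTF-8 ...
latin1_bytes = "¿Qué pasa?".encode("latin-1")
print(is_valid_utf8(latin1_bytes))  # prints False

# ... but it is after conversion.
print(is_valid_utf8(to_utf8(latin1_bytes, "latin-1")))  # prints True
```

To convert a whole file, read it in binary mode, pass the bytes through to_utf8, and write the result back in binary mode.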

Example commands for subtitle embedding

Here are a few examples that take an existing MP4 video file (input.mp4) and output an Ogg video file (output.ogv) with embedded subtitles:

If you have a subtitles file in English (the language code for English is 'en'):

ffmpeg2theora input.mp4 --subtitles english-subtitles.srt --subtitles-language en -o output.ogv

If you have a subtitles file in Spanish, encoded in Latin-1:

ffmpeg2theora input.mp4 --subtitles spanish.srt --subtitles-language es --subtitles-encoding latin1 -o output.ogv

There are other subtitles options for ffmpeg2theora, but these are the main ones.
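If you encode many videos, you may prefer to assemble these command lines from a script. The sketch below only builds the argument list, using the options shown above; the function name ffmpeg2theora_command is made up for this example, and running the result assumes ffmpeg2theora is installed and on your PATH.

```python
import shlex


def ffmpeg2theora_command(source, output, subtitles, language, encoding=None):
    """Build an ffmpeg2theora argument list that embeds one SRT file."""
    command = [
        "ffmpeg2theora", source,
        "--subtitles", subtitles,
        "--subtitles-language", language,
    ]
    if encoding is not None:
        # Only needed when the SRT file is not UTF-8.
        command += ["--subtitles-encoding", encoding]
    command += ["-o", output]
    return command


command = ffmpeg2theora_command(
    "input.mp4", "output.ogv", "spanish.srt", "es", encoding="latin1"
)
print(shlex.join(command))

# To actually run the encoder:
#   import subprocess
#   subprocess.run(command, check=True)
```

Passing the arguments as a list, rather than as one string, avoids quoting problems with file names that contain spaces.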

Adding subtitles to an existing video

If you have a Theora video with no embedded subtitles, it's easy to add some without encoding the video again. Since each subtitle language is stored in the Ogg file separately, each can be manipulated separately.

Internally, subtitles embedded in an Ogg file are encoded as Kate streams. Such streams are created by ffmpeg2theora, but can also be created 'raw' from an SRT file. The kateenc tool does this. On Ubuntu, kateenc is part of the libkate-tools package. To install it, run:

sudo apt-get install libkate-tools

For instance, the following creates a new English subtitles stream from an SRT file. Remember, the code for English is 'en':

kateenc -t srt -o english-subtitles.ogg english.srt -c SUB -l en

Now you've got a single subtitles stream, which you can add to your Theora video:

oggz-merge -o video-with-subtitles.ogv original-video.ogv english-subtitles.ogg

On Ubuntu, oggz-merge is part of the oggz-tools package. To install it, run:

sudo apt-get install oggz-tools

In fact, the oggz tools allow much more powerful manipulation of all the different tracks in the video, so you can also add more audio languages, and so on.
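The kateenc and oggz-merge steps above can be repeated for several languages. The following sketch builds the corresponding command lines with the same options as the examples above; the function name and the intermediate file naming scheme are made up for this example, and the commands are only printed, not run.

```python
import shlex


def kate_merge_commands(video, output, subtitles):
    """Build kateenc and oggz-merge commands.

    subtitles maps a language code (such as 'en') to the path of an SRT file.
    """
    commands = []
    streams = []
    for language, srt_path in subtitles.items():
        # Hypothetical naming scheme for the intermediate Kate streams.
        stream = f"{language}-subtitles.ogg"
        commands.append(
            ["kateenc", "-t", "srt", "-o", stream, srt_path, "-c", "SUB", "-l", language]
        )
        streams.append(stream)
    # One final merge adds all subtitle streams to the video.
    commands.append(["oggz-merge", "-o", output, video] + streams)
    return commands


for command in kate_merge_commands(
    "original-video.ogv",
    "video-with-subtitles.ogv",
    {"en": "english.srt", "de": "german.srt"},
):
    print(shlex.join(command))
```

Each printed line can be pasted into a terminal, or passed to subprocess.run as a list if you want the script to run the tools itself.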

Playing Subtitles

There are several easy ways to play back subtitles with an Ogg Theora video.

VLC

First, install VLC from the website (http://videolan.org/vlc) if you haven't already. These instructions assume you have a file or DVD with subtitles that you want to display while playing the video.

There are three ways you may want to use VLC to display subtitles.

- From a DVD

- From a multilingual file (e.g. Matroska)

- From a separate subtitle file which is distributed with the video file.

Play subtitles on a DVD disk

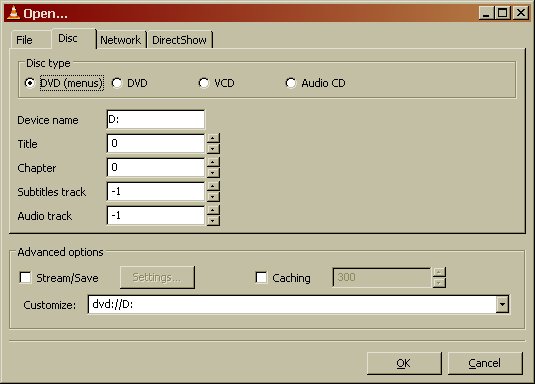

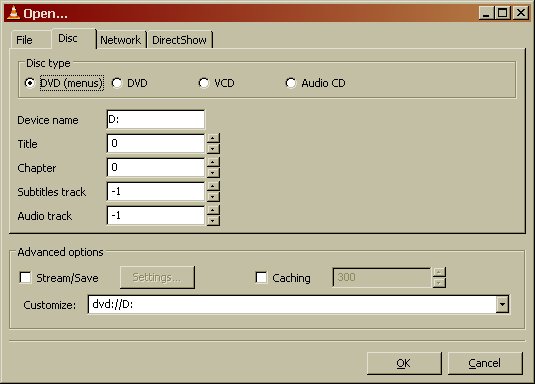

To do this, put the DVD into your DVD drive. Open VLC and select File > Open Disk.

Enter the DVD drive letter. It may appear automatically. On Windows this may be drive D:, and on GNU/Linux something like /media/dvd.

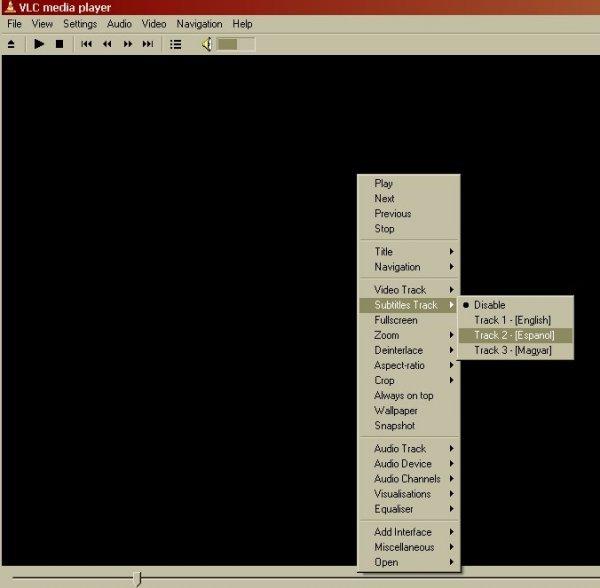

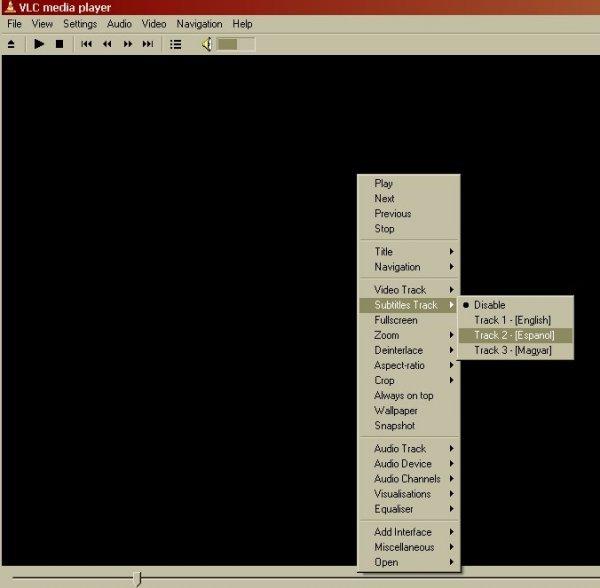

Then click OK. The menu page of your DVD should appear. Click on the video you want to watch. When the video starts, right-click on the video image and select the subtitle track you wish to view.

The subtitles should then appear on screen.

Play subtitles in Matroska files

The process is exactly the same as above, except that you start by selecting File > Open File. You then see this screen.

Click the Browse button to select the video file you want to play. If the file is a Matroska file with an *.mkv extension, you can click OK after browsing for it, as the file already contains the subtitle information.

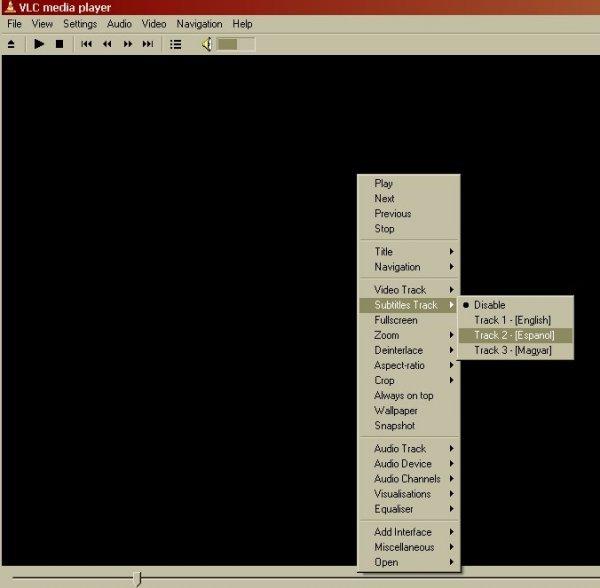

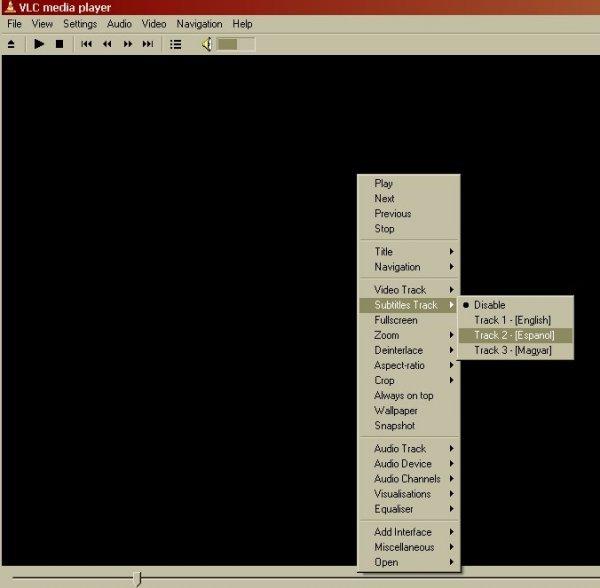

Then select the subtitle language stream by right-clicking the video screen, selecting Subtitle Track and choosing the language.

Play External Subtitles

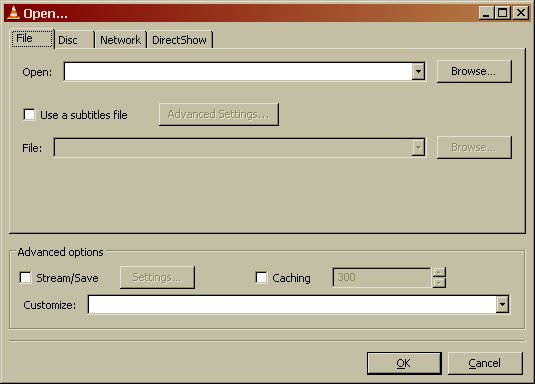

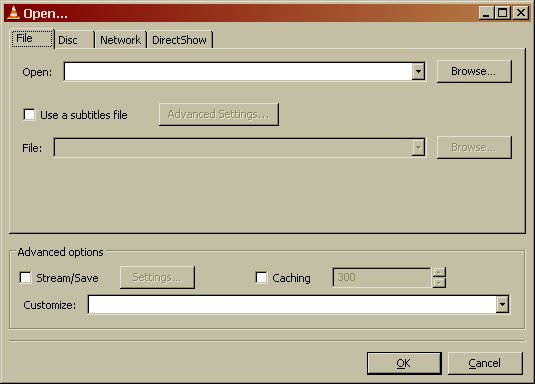

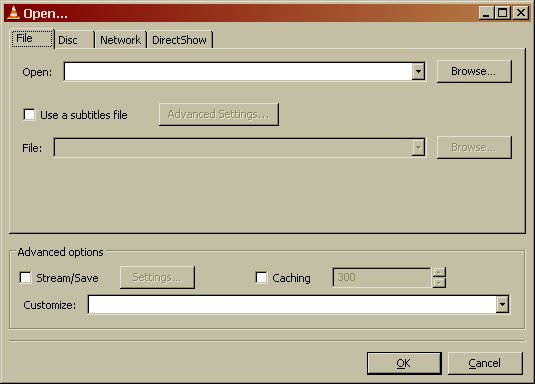

If you want to play an external subtitle file, for example an SRT file, select File > Open File.

In the Open box click the Browse button and choose your video file.

Then put a tick in the box Use a subtitle file, and click Browse to locate your external subtitle file.

Then select the subtitle stream by right-clicking the video screen, selecting Subtitle Track and choosing the track (for an external file such as an SRT file there will normally be only one track).

Publishing

Depending on how you created the subtitles - embedded in the video or as a separate .srt file - you have different options to publish them online with your video.

Hosting external subtitle files along with video on a web server

We will assume you have one or more subtitles (in SRT format) and the video itself.

HTML5 video tag and Javascript

You can offer a web preview of Theora video alongside a given .srt with the help of jquery.srt.

The example below uses jQuery, a popular free software JavaScript library (http://jquery.com/), together with jquery.srt, an example JavaScript implementation that displays subtitles from an SRT file in a webpage, available at: http://v2v.cc/~j/jquery.srt/jquery.srt.js

jquery.srt is a small script that loads an SRT file and displays it inside a div on your page, under the video or as an overlay.

The HTML excerpt below loads both JavaScript files and references your subtitle file. Only one subtitle file can be referenced at a time, unless you develop the JavaScript further.

<script type="text/javascript" src="../jquery.js"></script>

<script type="text/javascript" src="../jquery.srt.js"></script>

<video src="http://example.com/video.ogv" id="video" controls>

</video>

<div class="srt"

data-video="video"

data-srt="http://example.com/video.srt"></div>

This example could look like this on your page.

For another example using subtitles in several languages, you can have a look at this demo from Mozilla http://people.mozilla.com/~prouget/demos/srt/index2.xhtml

Another example using multiple subtitles and providing an interface to select them can be found at http://www.annodex.net/~silvia/itext/.

Hosting video with embedded subtitles on a web server

If you have Ogg files with embedded subtitles and want to display those in the browser, you can use the video tag right now. However, not all browsers support the video tag. Cortado, a Java plugin that can play Theora videos, also supports embedded subtitles, and it works in all browsers that have Java installed. You can get the latest version of Cortado from http://www.theora.org/cortado/

<applet code="com.fluendo.player.Cortado.class" archive="cortado.jar"

width="512" height="288">

<param name="url" value="video.ogv"/>

<param name="kateLanguage" value="en">

</applet>

To use subtitles with Cortado, you pass it the URL of the video with embedded subtitles and, in the same way, a parameter for the subtitle track to use. The easiest way is to select the language you want via the kateLanguage parameter:

<param name="kateLanguage" value="en">

It is also possible to change or disable the subtitles via JavaScript. If you want to switch to French subtitles, you could set the kateLanguage option from your script by calling:

document.applets[0].setParam("kateLanguage", "fr");

Web Video Accessibility

When we talk about Web video here, we explicitly refer to video published in Ogg Theora/Vorbis format inside a Web browser that supports the HTML5 video element.

Accessibility of video refers to several different aspects of usability of video, depending on what user group we are looking at. So, before diving into the different aspects and how they can be supported, we list the user groups and their specific requirements.

Accessibility user groups